How to know if you're actually ready to automate

Readiness is not enthusiasm. Here's the diagnostic most operators skip — and why it costs them.

In 2019, a mid-size regional hospital network deployed a fleet of autonomous mobile robots to handle medication cart transport between pharmacy and nursing floors. Six months later, the fleet was idle. Not because the robots failed mechanically; they navigated corridors fine. The failure was upstream: the medication dispensing workflow was inconsistently documented across three different nursing units, the pharmacy's handoff process changed daily depending on the shift supervisor, and the robot's route scheduling system had no way to handle the exceptions that made up roughly 30% of all runs. The technology was sound. The environment it was dropped into was not ready for it.

This is the canonical first-deployment failure. And it repeats — in warehouses, factories, hotels, and farms — because operators assess their automation readiness the wrong way. They ask "is this robot good enough?" when the diagnostic question is "is this operation stable enough?"

Readiness is a property of your operation, not the vendor's product.

The Four Gates

Every first deployment must pass four readiness gates before a purchase makes sense. These are not sequential — they're parallel conditions. All four must be true. One failing gate is sufficient to recommend waiting.

Gate 1: Process Stability

A robot automates a process. If the process itself is unstable (if steps vary by operator, shift, or day of the week), the robot will constantly encounter edge cases it wasn't designed for. This is the single largest predictor of first-deployment failure across sectors.

The diagnostic: Pick the specific process you're considering automating. Shadow the people who perform it right now. Ask: does every operator perform this process the same way? Do the steps change based on conditions the robot can't observe? What percentage of runs involve exceptions or workarounds?

If the exception rate is above 15%, fix the process first. A robot can handle variation within a designed range. It cannot handle a process that isn't itself designed.
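
The exception-rate check can be sketched in a few lines of Python. This is a minimal illustration, assuming you have logged each shadowed run with a yes/no flag for whether it needed a workaround; the `ObservedRun` record and the sample data are hypothetical, and only the 15% cutoff comes from the text above.

```python
from dataclasses import dataclass

EXCEPTION_THRESHOLD = 0.15  # the 15% cutoff stated above


@dataclass
class ObservedRun:
    """One shadowed run of the target process (hypothetical record shape)."""
    operator: str
    had_exception: bool  # True if the run needed a workaround or deviated from the documented steps


def exception_rate(runs: list[ObservedRun]) -> float:
    """Fraction of observed runs that involved an exception or workaround."""
    if not runs:
        raise ValueError("need at least one observed run")
    return sum(r.had_exception for r in runs) / len(runs)


def passes_process_gate(runs: list[ObservedRun]) -> bool:
    """Gate 1 passes only if the exception rate is not above the threshold."""
    return exception_rate(runs) <= EXCEPTION_THRESHOLD


# Illustrative data only: 20 shadowed runs, 6 of which needed a workaround.
runs = [ObservedRun("op_a", i < 6) for i in range(20)]
print(f"exception rate: {exception_rate(runs):.0%}")
print("process gate cleared" if passes_process_gate(runs) else "fix the process first")
```

A handful of runs will not give a stable rate, so the number is only as good as the shadowing behind it.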

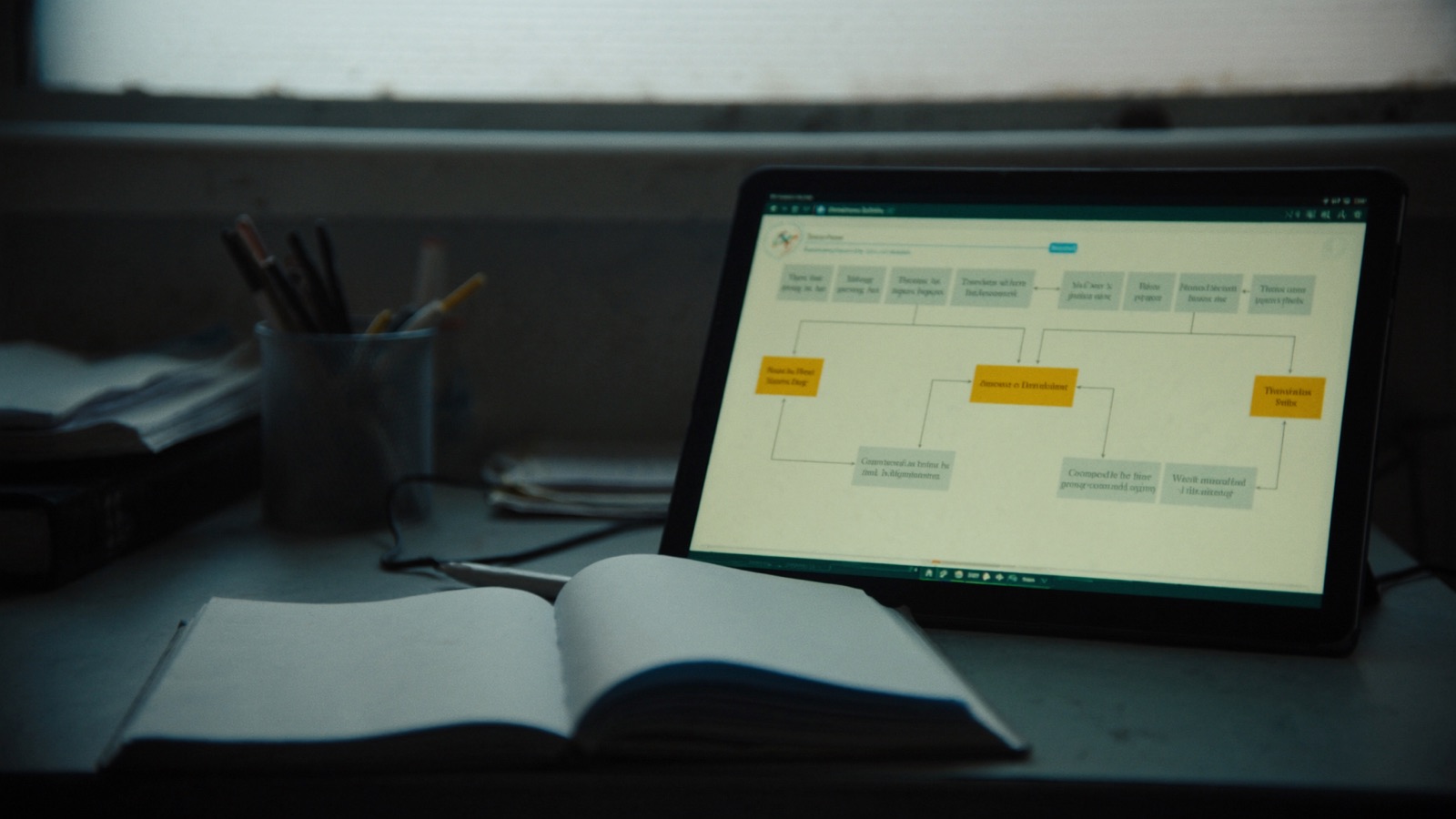

A useful test from operations research: if you cannot write down the process as a flowchart that two different operators would follow identically, you cannot automate it yet.

Red flag: You're hoping the robot will "force" the process to become consistent. It won't — it will fail repeatedly on the inconsistency and be blamed for it.

Gate 2: Data and Measurement Infrastructure

Robots generate data. They also depend on data. Both directions require infrastructure you may not have.

The first question is whether you can measure the current baseline. A robot's business case depends on improvement over baseline. If you don't know your current throughput, cycle time, labor hours, or error rate on the target process, you cannot prove the robot is helping. This sounds obvious. Most operators cannot answer it when pressed.

The second question is connectivity. Most modern autonomous robots, mobile or fixed, depend on reliable network connectivity, typically Wi-Fi or 5G. Signal strength, handoff between access points, interference from industrial equipment, and dead zones near thick structural elements are infrastructure problems, not vendor problems. A robot operating in poor RF conditions will perform unreliably and be blamed as defective.

The diagnostic:

- Run your current baseline metrics for 30 days before any vendor engagement. Hours per unit, error rate, cycle time. If you don't have the systems to collect this, that's the first investment.

- Have an IT contractor produce an RF heat-map of the deployment zone. This takes a few hours and costs a few hundred dollars. It tells you exactly where signal dead zones are.
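
As a rough sketch of the baseline step, the three metrics named above can be computed from daily logs, even ones kept by hand in a spreadsheet. The `DailyLog` fields and the sample numbers are assumptions for illustration; only the 30-day window and the metric names come from the text.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class DailyLog:
    """One day's manually collected numbers (hypothetical field names)."""
    units_completed: int
    labor_hours: float
    errors: int
    cycle_times_min: list[float]  # minutes per run, sampled that day


def baseline(logs: list[DailyLog], min_days: int = 30) -> dict[str, float]:
    """Aggregate daily logs into hours per unit, error rate, and mean cycle time."""
    if len(logs) < min_days:
        raise ValueError(f"need at least {min_days} days of data, got {len(logs)}")
    units = sum(d.units_completed for d in logs)
    cycles = [t for d in logs for t in d.cycle_times_min]
    if units == 0 or not cycles:
        raise ValueError("no completed units or cycle-time samples in the window")
    return {
        "hours_per_unit": sum(d.labor_hours for d in logs) / units,
        "error_rate": sum(d.errors for d in logs) / units,
        "mean_cycle_time_min": mean(cycles),
    }


# Illustrative data only: 30 identical days.
logs = [DailyLog(200, 16.0, 4, [11.0, 12.0, 13.0]) for _ in range(30)]
for name, value in baseline(logs).items():
    print(f"{name}: {value:.3f}")
```

Raising an error when fewer than 30 days are available is deliberate: a short window is exactly the shortcut the baseline is meant to prevent.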

Red flag: You're planning to measure ROI "once the robot is running." Baseline measurement before deployment is non-negotiable.

Gate 3: Physical Infrastructure Readiness

Beyond connectivity, the physical environment must accommodate the specific robot type you're deploying. This varies by category:

| Robot type | Key infrastructure questions |
|---|---|
| Autonomous mobile robots (AMRs) | Floor flatness, aisle width, charging station placement, elevator integration if multi-floor |
| Fixed-arm cobots | Workstation height and clearance, fixture design, safety cage or collaborative zone layout |
| Outdoor agricultural robots | GPS signal quality, field terrain mapping, power/charging in the field |
| Cleaning robots | Floor surface type, obstacle consistency, service corridor width |
| Delivery/logistics robots | Loading dock design, rack height and spacing, dock-leveler clearance |

None of these can be assessed from a vendor brochure. They require a site visit from someone — the vendor, an integrator, or an independent consultant — who has deployed that specific robot type in a comparable environment.

The diagnostic: Before signing any purchase agreement, require a formal site assessment in writing. This should include a list of infrastructure prerequisites with a pass/fail for each. Any prerequisite that fails is either a pre-condition for deployment or a reason to not proceed.
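
One way to keep a written site assessment honest is to record it as a pass/fail list and derive the outcome mechanically. The sketch below is illustrative only: the `Prerequisite` record, the `remediation_funded` flag, and the sample items are assumptions, not a standard assessment format.

```python
from dataclasses import dataclass


@dataclass
class Prerequisite:
    """One line of a written site assessment (hypothetical record shape)."""
    name: str
    passed: bool
    remediation_funded: bool = False  # assumption: a failed item can have a funded fix


def assessment_outcome(items: list[Prerequisite]) -> str:
    """Turn a pass/fail list into one of three outcomes."""
    failed = [p for p in items if not p.passed]
    if not failed:
        return "proceed"
    if all(p.remediation_funded for p in failed):
        # Failed items become pre-conditions that must be fixed before deployment.
        return "proceed after remediation: " + ", ".join(p.name for p in failed)
    return "do not proceed"


# Illustrative items for an AMR deployment, drawn from the table above.
items = [
    Prerequisite("floor flatness", passed=True),
    Prerequisite("aisle width", passed=True),
    Prerequisite("elevator integration", passed=False, remediation_funded=True),
]
print(assessment_outcome(items))
```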

Red flag: The vendor is willing to sign a contract without doing a site assessment. That is a vendor protecting its commission, not your deployment.

Gate 4: Organizational Readiness

Technology deployments fail at the organizational layer more often than the technical one. Three conditions matter here.

An accountable owner. The deployment needs a single named person who is accountable for its outcome — not a committee, not "the operations team." This person sets up KPIs, runs the weekly stand-up, reviews incident logs, and makes the go/no-go recommendation at 90 days. Without this person, nobody does the unglamorous mid-deployment work of measurement and iteration.

Change management capacity. Automation changes workflows for the people around the robot. Those people need to understand what changes, why, and what it means for their work — before day one, not after. This takes real calendar time. If your operations team is already maxed out, the deployment will consume capacity it doesn't have, and shortcuts will be taken on change management. Shortcuts on change management kill pilots.

Budget for integration and iteration, not just hardware. First-time buyers routinely budget for the robot and nothing else. Real first-deployment costs include: installation and site modifications (10–30% of hardware cost), staff training time, software integration with existing systems (WMS, ERP, CMMS), and an operational contingency for the first 90 days when throughput typically dips before it improves. Projects that budget only for hardware hit their first unexpected cost and pause — then stall — then get cancelled.
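
To make the arithmetic concrete, here is a small sketch that turns a hardware quote into a full first-deployment range. Only the 10–30% installation and site-modification range comes from the text; the function name and every dollar figure are hypothetical placeholders to be replaced with your own estimates.

```python
def first_deployment_budget(
    hardware: int,
    training: int,
    software_integration: int,
    contingency: int,
) -> tuple[int, int]:
    """Return (low, high) total budget in whole dollars.

    Installation and site modifications are estimated at 10-30% of hardware
    cost, per the range above. The other line items are caller-supplied estimates.
    """
    site_low = hardware * 10 // 100
    site_high = hardware * 30 // 100
    other = training + software_integration + contingency
    return hardware + site_low + other, hardware + site_high + other


# Hypothetical figures for illustration only.
low, high = first_deployment_budget(
    hardware=200_000,
    training=8_000,
    software_integration=25_000,
    contingency=15_000,
)
print(f"hardware only: $200,000; full budget: ${low:,} to ${high:,}")
# prints: hardware only: $200,000; full budget: $268,000 to $308,000
```

With these placeholder numbers, a hardware-only budget understates the project by roughly a third to a half, which is the gap that stalls projects at their first unexpected cost.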

The diagnostic: Ask your leadership team three questions:

- Who is the single named owner of this deployment, with authority to make decisions?

- What is the change management plan for affected staff before day one?

- What is the budget for integration, site modifications, and 90-day operational support — separate from the hardware cost?

If any of these three doesn't have a clear answer, organizational readiness is not there yet.

The Readiness Matrix

Here is a simple 2x2 for where you likely sit after running these four diagnostics, with Gates 2 through 4 collapsed into a single infrastructure-and-organization axis:

| Process stability | Infrastructure + org readiness | Recommendation |
|---|---|---|
| High | High | Go. Run a time-bounded pilot with written KPIs. |
| High | Low | Fix infrastructure first. Budget and timeline before hardware. |
| Low | High | Stabilize the process before automating. 3–6 months of process improvement first. |
| Low | Low | Not ready. Premature automation makes chaotic operations faster at failing. |
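
The matrix is small enough to express as a lookup table, which can be handy in a shared assessment template. This is a minimal sketch; the function and variable names are illustrative, and the recommendation text is copied from the table above.

```python
# Keys are (process_stable, infra_and_org_ready); values are the table's recommendations.
RECOMMENDATIONS = {
    (True, True): "Go. Run a time-bounded pilot with written KPIs.",
    (True, False): "Fix infrastructure first. Budget and timeline before hardware.",
    (False, True): "Stabilize the process before automating. 3–6 months of process improvement first.",
    (False, False): "Not ready. Premature automation makes chaotic operations faster at failing.",
}


def recommend(process_stable: bool, infra_and_org_ready: bool) -> str:
    """Look up the quadrant for a given pair of high/low ratings."""
    return RECOMMENDATIONS[(process_stable, infra_and_org_ready)]


print(recommend(process_stable=False, infra_and_org_ready=True))
```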

The hardest quadrant to sell to leadership is "low process stability, high org readiness." Organizations that are well-run and eager to invest often assume their operational competence transfers directly to automation deployment. It does — eventually. But the process must be documented, stable, and measurable before the robot can help with it.

What "Ready Enough" Actually Looks Like

Readiness is not perfection. Every first deployment will encounter surprises. The question is whether you have the infrastructure and organizational capacity to respond to surprises productively rather than reactively.

A reasonable readiness threshold:

- The target process has been documented and followed consistently for at least 60 days

- You can measure the baseline for at least two KPIs right now, without any new systems

- A site assessment has been completed and all infrastructure prerequisites are met or have funded remediation plans

- A named deployment owner is designated and has 20% of their time allocated to the pilot

- The budget includes hardware + 30% for integration and first-90-day support

If you clear all five, you are ready to engage vendors seriously.

If you're missing one or two, most gaps can be closed in 30–90 days with focused effort. The exception is process instability — that typically requires 3–6 months of disciplined process improvement before automation is viable.
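
The five-item threshold and the rule of thumb for closing gaps can be sketched as a simple checklist function. The `ReadinessSnapshot` fields and the sample values are hypothetical; the thresholds (60 days, two KPIs, 20% of the owner's time, hardware plus 30%) come from the list above. The text does not say what to do with more than two gaps, so that branch is an assumption.

```python
from dataclasses import dataclass


@dataclass
class ReadinessSnapshot:
    """Self-assessed inputs for the five-item threshold (hypothetical field names)."""
    days_process_followed: int        # consecutive days the documented process was followed
    measurable_kpis: int              # KPIs you can measure today without new systems
    site_prereqs_met_or_funded: bool  # site assessment done, gaps met or funded
    owner_time_pct: int               # percent of the named owner's time allocated
    hardware_cost: int
    total_budget: int


def gaps(s: ReadinessSnapshot) -> list[str]:
    """Return the names of threshold items that are not yet met."""
    checks = {
        "process": s.days_process_followed >= 60,
        "baseline": s.measurable_kpis >= 2,
        "site": s.site_prereqs_met_or_funded,
        "owner": s.owner_time_pct >= 20,
        "budget": s.total_budget * 100 >= s.hardware_cost * 130,  # hardware + 30%
    }
    return [name for name, ok in checks.items() if not ok]


def next_step(s: ReadinessSnapshot) -> str:
    """Apply the rule of thumb above to the list of gaps."""
    missing = gaps(s)
    if not missing:
        return "engage vendors"
    if "process" in missing:
        return "3-6 months of process improvement first"
    if len(missing) <= 2:
        return "close gaps in 30-90 days: " + ", ".join(missing)
    return "more than two gaps: reassess before setting a timeline"  # assumption, not from the text


# Illustrative values only: everything is in place except process stability.
snapshot = ReadinessSnapshot(45, 2, True, 20, 200_000, 260_000)
print(gaps(snapshot), "->", next_step(snapshot))
```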

The Cost of Deploying Before You're Ready

Operations leaders feel pressure to automate — from labor costs, from competitive positioning, from the technology's visibility in the trade press. That pressure is real. But deploying before the four gates are cleared doesn't accelerate automation. It produces a failed deployment, staff skepticism that can take years to overcome, and a budget conversation with finance that makes the next deployment harder to fund.

The most expensive automation project is the one that fails and then creates organizational resistance to the second attempt.

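Running this diagnostic costs almost nothing beyond staff time and a few hundred dollars for the RF survey. Running it honestly, without optimism bias, takes about two weeks of internal assessment on top of the 30-day baseline measurement. Those two weeks are the cheapest investment you can make in your automation program.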

If you clear the gates, the next question is whether robots or additional headcount is the right answer. That's the subject of the next article in this series.