Surgeon Training Curve and Proctoring: The 18-Month Onboarding Window

The clinical and financial risks of robotic surgery are highest in the first 25–50 cases. Here is how institutions manage that window without sacrificing patient outcomes or surgical throughput.

A 2015 analysis in the British Journal of Urology International examined the learning curve for robotic-assisted radical prostatectomy at a new robotic program and found that operative time did not stabilize at experienced-surgeon levels until cases 40–60. In the early case series (cases 1 through 20), operative time ran approximately 40% longer than the equivalent open procedure. Complication rates were also higher in the early series before declining toward the rates achieved by experienced robotic surgeons.

That analysis was not an argument against robotic surgery. It was an argument for taking the learning curve seriously as a clinical and operational variable — one that has a defined shape, a defined duration, and a defined set of interventions that either accelerate or prolong it.

For hospital administrators and OR directors commissioning a new robotic program, understanding the learning curve is not a clinical detail. It is a throughput problem, a credentialing liability, and a financial variable that sits inside your ROI model whether you account for it or not.

What "Learning Curve" Actually Means

The term learning curve in surgical literature refers to the number of cases required for a surgeon to reach a defined threshold of performance — usually a combination of operative time, intraoperative complication rate, and conversion rate (cases converted from robotic to open or laparoscopic approach mid-procedure).

The peer-reviewed literature on robotic surgery learning curves shows a wide range, and the range is real rather than imprecise:

| Procedure | Published learning curve range | Most commonly cited threshold |
|---|---|---|
| Radical prostatectomy | 20–150 cases | 20–40 cases (functional competence) |
| Robotic hysterectomy | 20–50 cases | 20–30 cases |
| Robotic colorectal resection | 30–70 cases | 40–50 cases |
| Robotic cholecystectomy | 10–30 cases | 15–20 cases |
| Robotic lobectomy | 30–80 cases | 40–50 cases |
| Robotic cystectomy | 30–100 cases | 50+ cases |

The wide range reflects real sources of variation: the surgeon's prior laparoscopic experience, the complexity of the specific procedure, the quality of the training curriculum completed before first clinical case, and the volume of cases per month during the learning period (faster case accumulation shortens the calendar duration of the curve).

A surgeon doing five robotic cases per month will complete a 40-case learning curve in eight months. The same surgeon doing one case per month will take 40 months, more than three years, to accumulate the same experience, with a much longer window of elevated risk.
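The arithmetic above can be sketched in a few lines of Python. This is a planning aid only: it assumes a steady monthly case volume, and the function name `months_to_complete` is hypothetical.

```python
import math


def months_to_complete(curve_cases: int, cases_per_month: float) -> int:
    """Calendar months needed to accumulate `curve_cases` at a steady monthly volume."""
    if cases_per_month <= 0:
        raise ValueError("cases_per_month must be positive")
    # Round up: a partly used month still extends the calendar window.
    return math.ceil(curve_cases / cases_per_month)


# A 40-case curve at several illustrative monthly volumes.
for volume in (5, 2, 1):
    months = months_to_complete(40, volume)
    print(f"{volume} cases/month -> {months} months ({months / 12:.1f} years)")
```

Running the loop with your own projected volumes shows how quickly the calendar window of elevated risk stretches as monthly volume drops.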

The Three Phases of the Onboarding Window

Phase 1: Pre-clinical training (weeks 1–8)

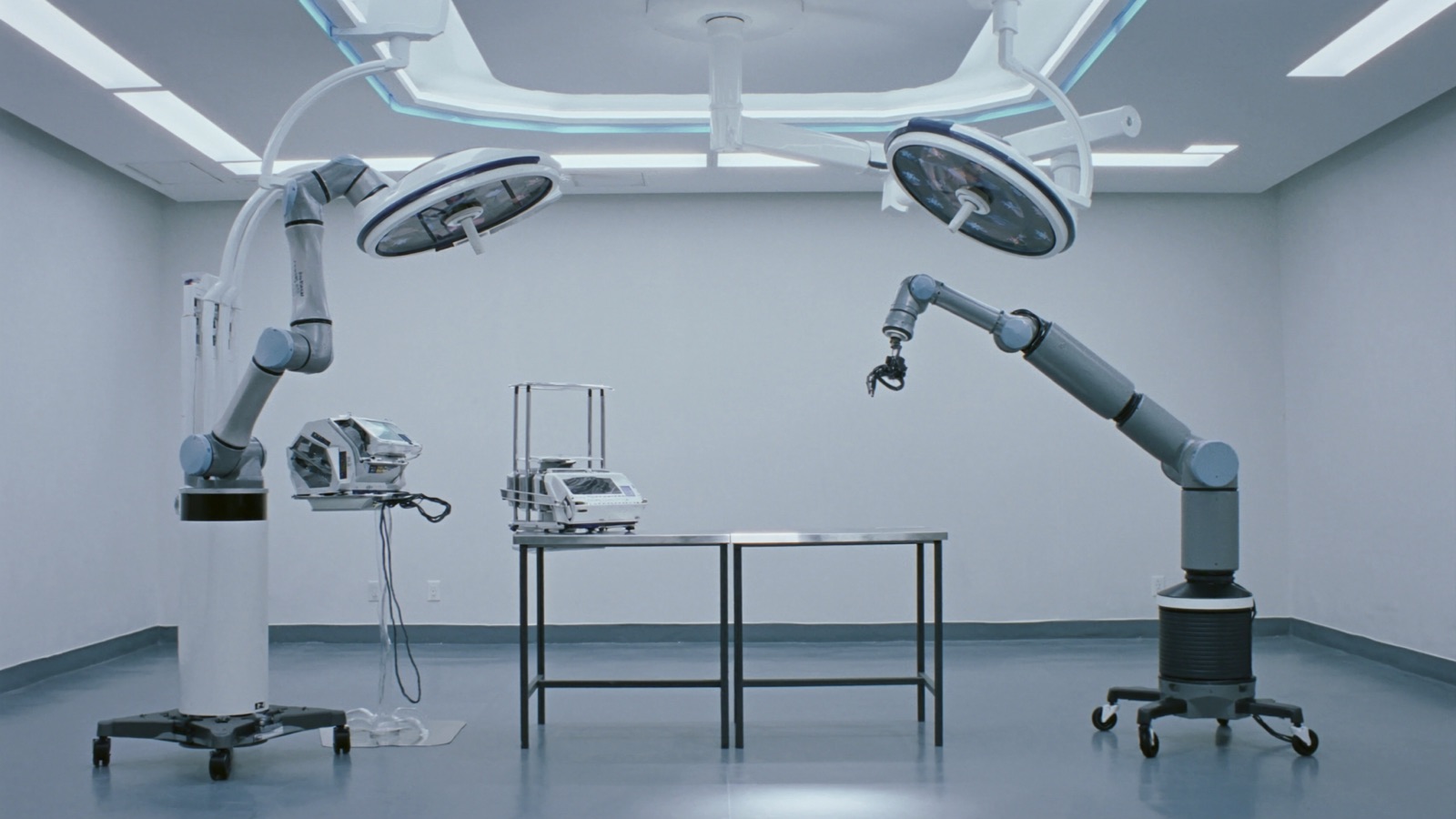

This phase happens before the surgeon touches a patient. The Intuitive training pathway — the most formalized in the industry — includes:

- Online learning modules (anatomy, platform operation, robotic ergonomics)

- Dry-lab simulation on the da Vinci console with virtual reality task modules

- Wet-lab cadaveric dissection under an experienced robotic surgeon-educator

- Bedside assistant training for the OR team that will support the surgeon's cases

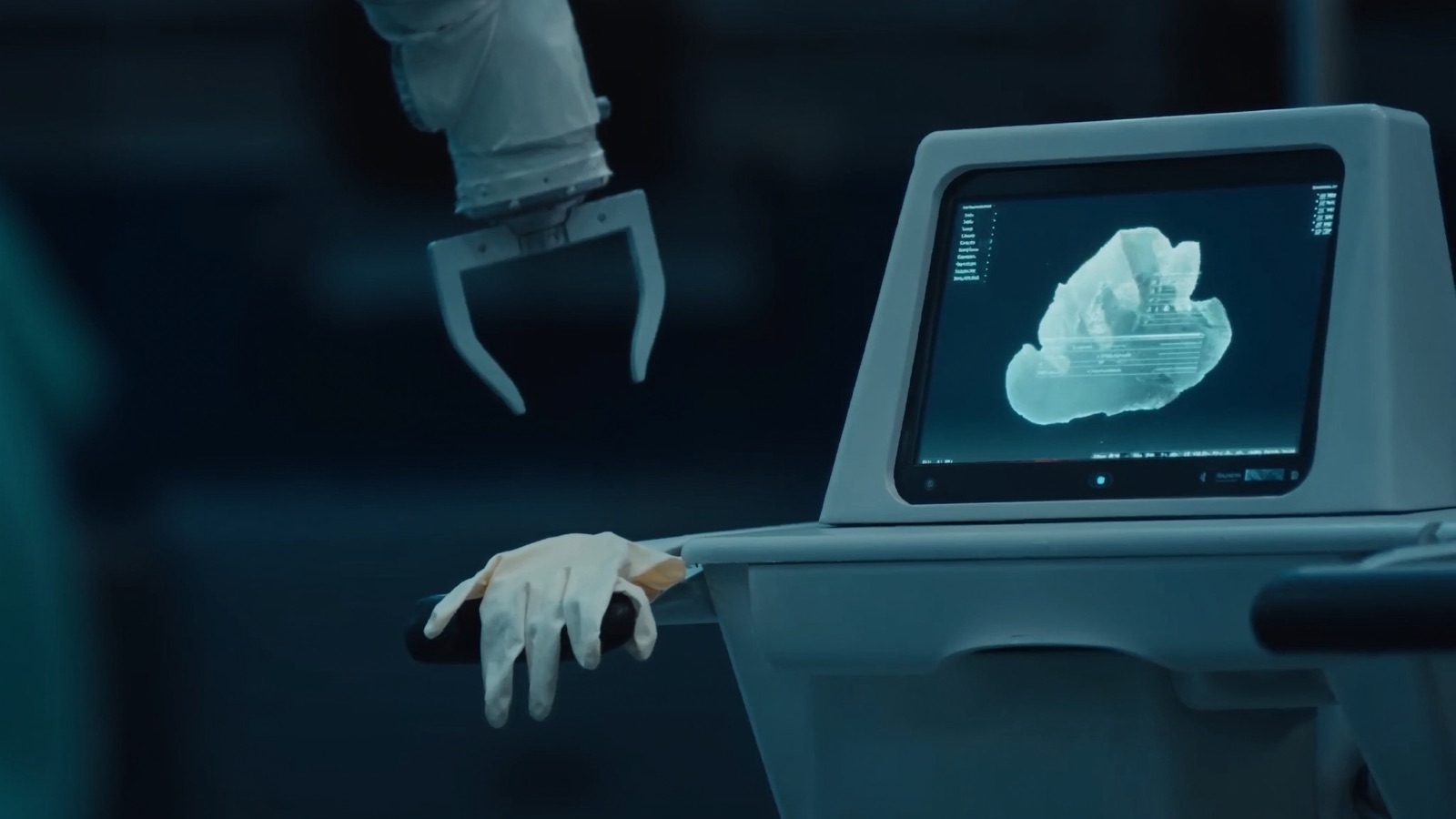

The VR simulation component deserves specific attention. Published evidence supports VR simulator proficiency as a predictor of early clinical performance — surgeons who achieve proficiency on validated simulation tasks before their first clinical case show shorter operative times and lower error rates in their initial case series. The simulation is not optional enrichment; it is a meaningful safety intervention.

The American College of Surgeons and specialty societies (AUA, AAGL, AATS) have published credentialing guidelines that specify minimum pre-clinical training requirements for their respective procedures. Review these before writing your credentialing policy.

Phase 2: Proctored clinical cases (cases 1–15+)

A proctor is an experienced robotic surgeon — typically with 100+ robotic cases in the relevant procedure — who supervises the learning surgeon's clinical cases and is available to take over if patient safety requires it. The proctor is present in the OR, not watching on a monitor.

Key distinctions:

- Preceptor role: more hands-on; may step in for specific technical maneuvers, functioning as a teaching presence

- Proctor role: supervises and certifies; intervenes only for safety; their presence certifies that the surgeon has achieved an acceptable performance standard

Proctoring requirements vary by specialty society and institution. The European Hernia Society, as a published example, has described a da Vinci credentialing pathway that requires a minimum number of proctored procedures before independent privileging. Urology programs typically require 5–10 proctored prostatectomies before independent practice, though hospital credentialing committees set the specific numbers.

Proctoring is expensive in practical terms. The proctor cannot bill for their time in the OR. They must travel to your facility or be a colleague at your institution with the relevant experience. External proctors are typically compensated by vendors (Intuitive has a proctor program) or by institutional arrangement. Understand who is paying the proctor and whether that creates any conflict of interest.

Phase 3: Supervised independence (cases 15–50)

After formal proctoring concludes, the surgeon is independently privileged but their outcomes should be tracked through your quality program. Complication rates, conversion rates, and operative times during this phase should be reviewed against benchmarks. This is not punitive surveillance — it is normal outcomes monitoring that any new program should have in place.

The transition from Phase 2 to Phase 3 is the highest-risk moment in the onboarding timeline. The proctor has certified the surgeon, the administrative oversight has concluded, but the surgeon has not yet accumulated the case volume to reach stable performance. Programs that do not maintain quality monitoring through Phase 3 miss the period when complications, if they occur, are most likely to appear.

The OR Team Learning Curve — The Overlooked Variable

Surgeons are not the only ones on a learning curve.

The scrub technician who manages the instrument table for robotic cases, the circulating nurse who manages cable and arm positioning, the anesthesiology team managing patient positioning in steep Trendelenburg for pelvic robotic cases — all of them have a learning curve too. Research on robotic OR team learning shows that setup time and team-driven errors peak in the first 20–30 cases a given team performs together, then decline as team coordination improves.

This means that a surgeon who has completed 100+ robotic cases at another institution and joins your facility does not bring a trained OR team with them. Your team starts from zero. The surgeon's operative time may be mature, but OR efficiency will still be suboptimal until your team accumulates experience.

Practical implications:

- Assign a consistent robotic OR team to all training-phase cases — same scrub tech, same circulator, same anesthesiologist where possible

- Track team setup time separately from surgeon operative time

- Do not rotate inexperienced team members into a case when the surgeon is also early in their curve — the compounded learning effect is dangerous
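The staffing rule in the last bullet can be expressed as a simple scheduling check. The sketch below is illustrative, not a validated tool: the team threshold of 20 cases is taken from the low end of the 20–30 case range cited above, the surgeon threshold of 40 cases is an assumed placeholder, and the function and parameter names are hypothetical.

```python
def inexperienced_roles(team_case_counts: dict[str, int], team_threshold: int = 20) -> list[str]:
    """Return the roles whose robotic case count is below the team threshold."""
    return [role for role, count in team_case_counts.items() if count < team_threshold]


def compounded_learning_risk(
    surgeon_cases: int,
    team_case_counts: dict[str, int],
    surgeon_threshold: int = 40,  # assumed placeholder; set per procedure and policy
    team_threshold: int = 20,     # low end of the 20-30 case range cited in the text
) -> bool:
    """True when an early-curve surgeon is paired with any early-curve team member."""
    surgeon_early = surgeon_cases < surgeon_threshold
    return surgeon_early and bool(inexperienced_roles(team_case_counts, team_threshold))


# Example: surgeon on case 12, with a circulator who has supported only 3 robotic cases.
team = {"scrub_tech": 35, "circulator": 3, "anesthesiologist": 28}
if compounded_learning_risk(12, team):
    print("Reassign before scheduling:", inexperienced_roles(team))
```

A check like this belongs in scheduling review rather than in the OR; it only flags the pairing, and the thresholds remain a local policy decision.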

How Simulation Accelerates the Curve

Every major robotic platform now has an associated virtual reality simulator. Intuitive's da Vinci Skills Simulator is the most widely studied; evidence from multiple published trials supports it as a validated training tool with predictive validity for early clinical performance.

The critical insight: simulator proficiency achieved before the first clinical case compresses the early plateau of the learning curve. Surgeons who reach proficiency benchmarks on validated simulator tasks (camera control, clutching, instrument exchange, basic suturing) in the pre-clinical phase perform measurably better in their first 10 clinical cases than surgeons who go directly to clinical cases without simulation.

For an OR director or program administrator, this translates to a specific policy commitment: no surgeon should perform a first robotic clinical case without documented simulator proficiency. This is not currently a universal standard — many programs have not formalized it — but the evidence supporting it is consistent.

Credentialing Infrastructure Your Hospital Must Have

Before your first robotic case, your institution should have in place:

A written robotic credentialing policy that specifies minimum training requirements (pre-clinical hours, simulator tasks, proctored case numbers), privileging criteria for initial independent practice, and the re-credentialing or performance review standard that applies if outcomes fall outside acceptable ranges.

A quality monitoring protocol covering the first 50 independent cases for any newly credentialed robotic surgeon: operative time tracking, conversion rate tracking, complication rate tracking, with defined thresholds that trigger peer review.
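A monitoring protocol of this kind reduces to comparing observed rates against thresholds your committee defines in advance. The following is a minimal sketch under stated assumptions: the threshold values are placeholders invented for illustration, not clinical benchmarks, and the `CaseRecord` and `review_triggers` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CaseRecord:
    operative_minutes: float
    converted: bool      # converted to open or laparoscopic mid-procedure
    complication: bool


# Placeholder thresholds for illustration only; real values come from your
# credentialing committee and procedure-specific benchmarks.
THRESHOLDS = {
    "mean_operative_minutes": 240.0,
    "conversion_rate": 0.10,
    "complication_rate": 0.15,
}


def review_triggers(cases: list[CaseRecord], thresholds: dict[str, float] = THRESHOLDS) -> list[str]:
    """Return the names of metrics that exceed their peer-review threshold."""
    if not cases:
        return []
    n = len(cases)
    observed = {
        "mean_operative_minutes": sum(c.operative_minutes for c in cases) / n,
        "conversion_rate": sum(c.converted for c in cases) / n,
        "complication_rate": sum(c.complication for c in cases) / n,
    }
    return [name for name, value in observed.items() if value > thresholds[name]]


# Example: ten cases, two conversions, one complication.
cases = [CaseRecord(200, i < 2, i == 5) for i in range(10)]
print(review_triggers(cases))
```

With small case counts a single event moves a rate substantially, so a trigger should open a peer review conversation rather than stand as a conclusion on its own.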

A proctor sourcing plan for each specialty you are launching. Know in advance who is proctoring your first urologic cases, your first gynecologic cases, your first colorectal cases. Do not leave this to vendor arrangement without understanding the vendor's financial relationship with the proctor.

A simulation access policy ensuring that surgeons in training have scheduled, supervised access to your simulator — not just ad-hoc access when the OR suite is not in use.

The 18-Month Horizon

The title of this article uses an 18-month window because that is a reasonable planning horizon for a new robotic program from contract signing to a fully operational, stable-performance program:

- Months 1–2: installation, team training, OR infrastructure

- Months 3–4: pre-clinical surgeon training and simulation

- Months 5–8: proctored cases, Phase 2 learning curve

- Months 9–14: supervised independence, Phase 3 curve

- Months 15–18: first full quarter of mature operation, initial outcomes review

Programs that expect a robotic platform to be fully economically productive in months 6–9 after installation consistently underestimate this window and produce budget variances that look like robot failures but are actually onboarding realities. Build 18 months of ramp-up costs into your capital commitment model before the purchase decision.
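The planning horizon can also be written down as data, which makes it easy to check what stage a program should be in at any given month. This is a sketch only: the month ranges and labels are copied from the list above, months are assumed to count from contract signing, and the function names are hypothetical.

```python
# (start_month, end_month, stage) taken from the 18-month horizon above.
TIMELINE = [
    (1, 2, "installation, team training, OR infrastructure"),
    (3, 4, "pre-clinical surgeon training and simulation"),
    (5, 8, "proctored cases"),
    (9, 14, "supervised independence"),
    (15, 18, "first full quarter of mature operation, initial outcomes review"),
]


def is_contiguous(timeline: list[tuple[int, int, str]], horizon: int = 18) -> bool:
    """Check that the stages cover months 1..horizon with no gaps or overlaps."""
    expected_start = 1
    for start, end, _ in timeline:
        if start != expected_start or end < start:
            return False
        expected_start = end + 1
    return expected_start - 1 == horizon


def stage_for_month(month: int) -> str:
    """Return the planned stage for a given month of the program."""
    for start, end, stage in TIMELINE:
        if start <= month <= end:
            return stage
    raise ValueError(f"month {month} is outside the planning horizon")


# Where a program stands in the months when full productivity is often expected.
for month in (6, 9):
    print(month, "->", stage_for_month(month))
```

Under these assumptions, months 6 and 9 fall in the proctored and supervised-independence stages, which is why productivity targets set for that period tend to miss.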

Next in this series: Pilot to Scale — Case Volume Thresholds for ROI