GPS-RTK, Computer Vision, or Both — Choosing the Localization Stack

Your crop type and operating environment determine which positioning approach is viable, not which is technically superior.

A 1,000-acre wheat operation in Kansas and a 40-acre strawberry farm in coastal California both want autonomous robots in their fields. The Kansas operation can use RTK GPS with high confidence: open sky, flat terrain, no canopy, predictable row structure. The coastal strawberry farm cannot rely on RTK GPS alone: the raised-bed structure means the robot must track individual plant positions within a row, beds can drift from their mapped coordinates after re-bedding, and the canopy that fills in as the season progresses hides the plant-level detail that satellite positioning was never able to see. Morning fog adds a second constraint: it does not block satellite signals, but it cuts the contrast that cameras depend on.

These two operations need different localization stacks. Choosing a robot system designed around the wrong approach for your environment is one of the most common — and most expensive — mistakes in ag robot procurement.

This article breaks down how each approach works, where it fails, and how to think about fusion systems that combine both.

What Localization Means for Agricultural Robots

In robotics, localization is the question: where am I, and where am I pointing? In agriculture, this resolves to two sub-problems:

- Field-level positioning: Where is the machine in the field? Which row, which position along the row?

- Plant-level positioning: Exactly where is the target (weed, crop, spray point) relative to the machine's working tools?

For autonomous tractors following pre-defined passes, field-level positioning is sufficient. For implement systems that trigger laser pulses, robotic arms, or individual spray nozzles at specific plant locations, plant-level positioning is required. These are meaningfully different engineering challenges.
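To make the two sub-problems concrete, here is a minimal sketch of how a plant-level detection might be placed in field coordinates once the machine's field-level pose is known. The function name, the flat local x/y field frame, and the heading convention (counterclockwise from the field x-axis) are assumptions for illustration, not any vendor's implementation.

```python
import math

def plant_field_position(machine_x, machine_y, heading_rad, forward_m, left_m):
    """Convert a plant offset measured in the machine frame to field coordinates.

    Assumed conventions: a flat local field frame in meters, heading measured
    counterclockwise from the field x-axis, offsets given as meters ahead of
    and to the left of the machine reference point.
    """
    cos_h, sin_h = math.cos(heading_rad), math.sin(heading_rad)
    x = machine_x + forward_m * cos_h - left_m * sin_h
    y = machine_y + forward_m * sin_h + left_m * cos_h
    return x, y

# Machine at (100 m, 50 m) heading along +y; weed seen 0.5 m ahead, 0.1 m to the left.
x, y = plant_field_position(100.0, 50.0, math.radians(90), 0.5, 0.1)
print(round(x, 3), round(y, 3))  # 99.9 50.5
```

The field-level terms (machine_x, machine_y, heading_rad) come from the positioning system; the plant-level terms (forward_m, left_m) come from whatever senses the plant. An error in either one lands directly on the tool.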

GPS-RTK: How It Works and Where It Fails

How it works:

Real-Time Kinematic GPS corrects the satellite positioning signal using a ground reference station whose precise coordinates are known. The correction signal (transmitted via radio or cellular) reduces positioning error from the ~3-meter standard GPS level to approximately 1–2 centimeters horizontal accuracy under ideal conditions.

For row-crop applications, 2 cm accuracy is generally sufficient for autonomous passes: the robot can follow a programmed track within a row with enough precision to avoid crop damage on most row spacings above 20 inches.
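A rough way to see why centimeter-level accuracy matters at 20-inch spacing is to work out the clearance left beside a wheel. The sketch below is illustrative only: the wheel width and crop-band width are hypothetical values, and real machines also have steering and implement error that this ignores.

```python
INCH_TO_CM = 2.54

def lateral_margin_cm(row_spacing_in, wheel_width_cm, crop_band_cm, position_error_cm):
    """Clearance left on each side of a wheel centered between two crop rows.

    crop_band_cm is the assumed width of soil each row of plants occupies.
    A negative result means the wheel can reach the crop band.
    """
    free_gap_cm = row_spacing_in * INCH_TO_CM - crop_band_cm
    return (free_gap_cm - wheel_width_cm) / 2 - position_error_cm

# Hypothetical machine: 25 cm wheel, 10 cm crop band, 20-inch rows.
with_rtk = lateral_margin_cm(20, 25, 10, position_error_cm=2)
without_rtk = lateral_margin_cm(20, 25, 10, position_error_cm=300)
print(round(with_rtk, 1))     # 5.9
print(round(without_rtk, 1))  # -292.1
```

Under these assumptions RTK leaves about 6 cm of margin per side, while uncorrected 3-meter positioning cannot keep the wheel between the rows at all.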

Where RTK excels:

- Open-field row crops: corn, soybeans, wheat, sorghum, cotton

- Vineyards with consistent inter-row spacing

- Orchard operations where the machine drives inter-row (not within canopy)

- Any operation where the task is repeatable path-following without real-time plant discrimination

Naïo Technologies' Oz navigates vineyards and market garden rows using RTK GPS as the primary positioning source, with the robot following pre-mapped row coordinates. John Deere's guidance and autonomy systems for conventional tractors are primarily RTK-based. Monarch Tractor's MK-V uses RTK as the foundation for autonomous tractor operation.

Where RTK fails:

- Heavy canopy: As crop canopy closes over the row in tall crops or dense vine systems, multipath interference and signal attenuation reduce accuracy. This is the primary limitation for in-canopy orchard and vineyard operations late in the season.

- Deep topographic features: Valley floors, hillside cuts, and operations near terrain features that mask portions of the sky degrade satellite geometry.

- Coastal signal environments: Coastal fog does not block satellite signals (L-band passes through water vapor), but ionospheric disturbances in some coastal regions can increase positioning errors.

- RTK base station range: For rover-to-base RTK without a correction network, accuracy degrades significantly beyond ~25 km from the base station. Farms far from commercial network infrastructure may need their own base station.

- What RTK cannot do: RTK tells you where the machine is. It does not tell you where the weeds are within the row, where the damaged fruit is on the plant, or where the row is if the physical row has drifted from the pre-mapped coordinate due to soil heave or re-bedding.
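The base station range limit in the list above can be approximated with the error model many receiver datasheets quote: a fixed term plus a parts-per-million term that grows with the distance to the base. The sketch below assumes 1 cm + 1 ppm as a typical figure; that value is an assumption, not a number from any specific receiver, so substitute the one on your datasheet.

```python
def rtk_horizontal_error_cm(baseline_km, fixed_cm=1.0, ppm=1.0):
    """Nominal RTK horizontal error for a given rover-to-base distance.

    Uses the common 'fixed + ppm x baseline' datasheet form. The defaults
    are assumed typical values. 1 ppm of 1 km is 1 mm, i.e. 0.1 cm.
    """
    return fixed_cm + ppm * baseline_km * 0.1

for km in (1, 10, 25, 50):
    print(f"{km:>2} km baseline: ~{rtk_horizontal_error_cm(km):.1f} cm")
```

This linear model is optimistic at long range. In practice the receiver may also fail to hold a fixed solution as the baseline grows, which is a larger loss of accuracy than the ppm term alone suggests.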

Signal correction options:

| Option | Accuracy | Cost |
|---|---|---|
| Satellite-delivered corrections (StarFire, WAAS) | ~10–30 cm | $0–$500/yr subscription |
| Cellular RTK correction network | 2–4 cm | $1,500–$4,000/yr |
| UHF radio base station | 1–2 cm | $8,000–$20,000 capital |
| Physical base station (own) | 1–2 cm | $8,000–$20,000 capital, $0 ongoing |

For operations already running precision planting, variable rate application, or guidance on conventional tractors, you may already have an RTK correction source that can serve the robot as well — confirm compatibility before purchasing a second subscription.
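The subscription-versus-capital tradeoff in the table comes down to a break-even calculation. Here is a minimal sketch using the table's own cost ranges; it ignores maintenance, financing, and resale value, and the function name is illustrative.

```python
def break_even_years(base_station_capital, annual_subscription):
    """Years of subscription fees that add up to the base station's purchase price."""
    return base_station_capital / annual_subscription

# Cost ranges taken from the table: $8,000-$20,000 capital vs. $1,500-$4,000 per year.
fastest = break_even_years(8_000, 4_000)    # cheap station, expensive subscription
slowest = break_even_years(20_000, 1_500)   # expensive station, cheap subscription
print(f"Break-even between {fastest:.1f} and {slowest:.1f} years")
```

The spread is wide, roughly 2 to 13 years, so the answer depends on real quotes for your location rather than on the general ranges.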

Computer Vision: How It Works and Where It Fails

How it works:

Machine vision localization uses cameras — typically RGB, depth (stereo or structured light), or multispectral — combined with trained neural network models to identify the robot's environment and the specific targets within it. Rather than asking "where am I according to satellites?", vision asks "what do I see, and what does that mean about where I am and what I should do?"

For agricultural applications, vision does two things:

- Navigation: Follow the row by tracking the crop line or the bed edge. Stop when an obstacle appears. Identify headland and turn.

- Detection: Find weeds within the row and distinguish them from crop plants. Identify ripe fruit. Detect disease or pest pressure. Evaluate spray coverage.
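As a simplified illustration of the navigation task, the sketch below finds the crop line in a single image scanline using the Excess Green index (2g - r - b), a classical vegetation index. This is a stand-in for the trained neural networks described above, not how any commercial system works; the threshold and the synthetic pixel colors are assumptions.

```python
def excess_green(r, g, b):
    """Excess Green index (2g - r - b) computed on brightness-normalized channels."""
    total = r + g + b
    if total == 0:
        return 0.0
    return (2 * g - r - b) / total

def row_center_offset(scanline, threshold=0.1):
    """Offset of the crop band from the image center, in pixels.

    scanline is a list of (r, g, b) tuples for one horizontal image row.
    Positive means the crop line is right of center; None means no
    vegetation pixels were found.
    """
    green_cols = [i for i, (r, g, b) in enumerate(scanline)
                  if excess_green(r, g, b) > threshold]
    if not green_cols:
        return None
    crop_center = sum(green_cols) / len(green_cols)
    return crop_center - (len(scanline) - 1) / 2

SOIL, CROP = (120, 100, 80), (60, 140, 50)       # synthetic colors
scanline = [SOIL] * 6 + [CROP] * 3 + [SOIL] * 1  # crop occupies columns 6-8
print(row_center_offset(scanline))  # 2.5
```

A steering controller would turn that pixel offset into a correction. The same index also shows why low-contrast light hurts: when soil and crop colors converge, fewer pixels clear the threshold.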

Carbon Robotics' LaserWeeder uses vision as its primary mode: the system detects each weed in real time and fires laser pulses at weed centers. FarmWise's Titan FT-35 uses vision to navigate rows and identify individual plant positions for its mechanical cultivation implement. Bonsai Robotics uses vision-based autonomy for orchard operations, specifically targeting the challenge of tree-nut harvest in California and Australia.

Where vision excels:

- Plant-level discrimination (weed vs. crop, ripe vs. unripe, healthy vs. diseased) — this is fundamentally a vision task that GPS cannot do

- Navigation under canopy where GPS signal is degraded

- Operations with irregular geometry that doesn't map cleanly to pre-defined coordinates

- Environments with high biological variance that requires real-time interpretation

Where vision fails:

- Low-contrast conditions: Early morning, evening, overcast days, and foggy conditions reduce the contrast between soil, crop, and weed that most models rely on. Systems trained on mid-day imagery often degrade significantly at dawn.

- Dust and debris: Vision sensors in dusty field conditions require regular cleaning (sometimes multiple times per day). Dust is the primary cause of unplanned stops in mechanically cultivated sandy-soil environments.

- Crop variety generalization: A model trained on one lettuce variety may not generalize to a different variety with different leaf morphology. Each new crop or variety introduction requires new training data and model validation — a cost in time and data collection that operators rarely budget for.

- High-speed operation: Most vision systems operate reliably at field speeds up to 3–5 mph. At 8–10 mph (useful for large-acreage row crops), frame rate, processing latency, and blur become limiting factors.

- Night operation: Some systems add IR illumination for night work, but most are designed for daylight.
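The speed limit in the list above follows from simple arithmetic: the machine keeps moving while the image is exposed and processed. The sketch below shows the calculation; the 100 ms latency, 2 ms exposure, and 1 mm-per-pixel ground resolution are assumed example values, not measurements from any system.

```python
MPH_TO_MPS = 0.44704

def travel_during_latency_cm(speed_mph, latency_ms):
    """Distance the machine covers between image capture and tool action."""
    return speed_mph * MPH_TO_MPS * (latency_ms / 1000) * 100

def motion_blur_px(speed_mph, exposure_ms, ground_mm_per_px):
    """Image smear during one exposure (m/s times ms gives mm of travel)."""
    travel_mm = speed_mph * MPH_TO_MPS * exposure_ms
    return travel_mm / ground_mm_per_px

for mph in (4, 10):
    lag = travel_during_latency_cm(mph, latency_ms=100)
    blur = motion_blur_px(mph, exposure_ms=2, ground_mm_per_px=1.0)
    print(f"{mph} mph: {lag:.1f} cm traveled during latency, {blur:.1f} px blur")
```

Under these assumptions, going from 4 to 10 mph raises the travel during latency from about 18 cm to about 45 cm, which the system must predict and compensate for to hit a plant-sized target.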

Sensor Fusion: The Hybrid Approach

Most current-generation commercial ag robots fuse RTK and vision rather than relying on one alone. The architectures vary:

RTK-primary, vision-secondary: RTK provides field-level positioning and primary navigation. Vision handles plant-level tasks (weed detection, obstacle avoidance, row-end detection). Naïo's newer systems use this approach: RTK for row-to-row navigation, vision for in-row object handling.

Vision-primary, RTK-secondary: Vision handles both navigation and detection. RTK provides a fallback position check and geo-referencing for the data log. Carbon Robotics' LaserWeeder uses this architecture — the laser targeting is vision-driven, but position data is geo-referenced for field record keeping.

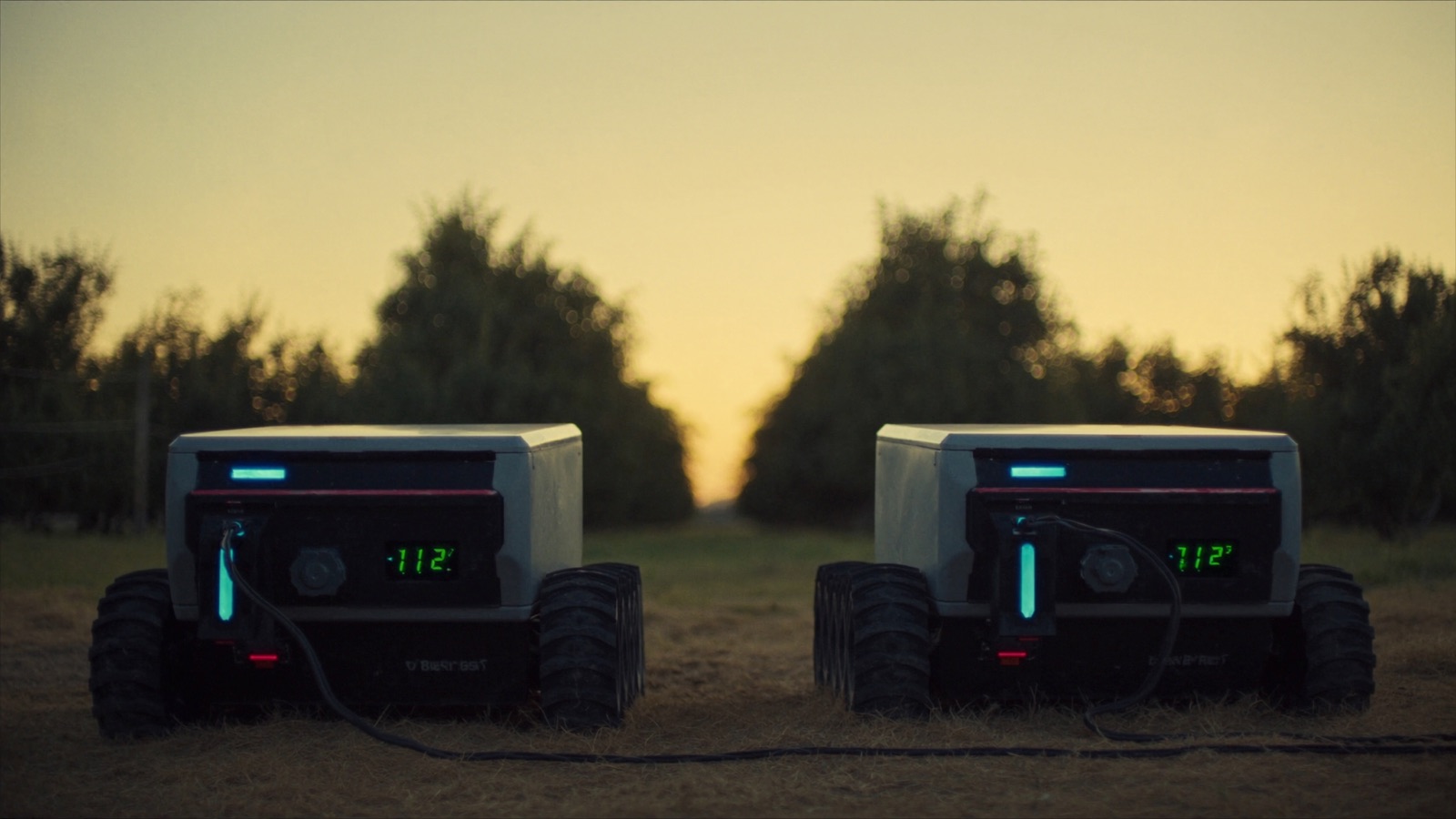

INS/LiDAR/RTK fusion: More advanced systems add inertial navigation (IMU) and LiDAR for environments where both GPS and vision are challenged — dense orchard canopy with both sky blockage and complex 3D structure. This is the direction Bonsai Robotics and some precision orchard platforms are heading.
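Whatever the architecture, the core idea of fusion is to weight each sensor by how much it can be trusted at that moment. A minimal sketch is the inverse-variance weighted mean below, applied to two estimates of cross-track offset. Production systems typically use a Kalman filter or similar estimator; the numbers here are made up for illustration.

```python
def fuse(estimates):
    """Inverse-variance weighted mean of (value, sigma) pairs.

    Returns the fused value and its standard deviation. Sensors with a
    smaller sigma (more trusted) pull the result toward their reading.
    """
    weights = [1 / sigma ** 2 for _, sigma in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, (1 / total) ** 0.5

# Cross-track offset in cm, as (reading, sigma). All values are hypothetical.
open_sky = fuse([(3.0, 2.0), (5.0, 4.0)])       # RTK fixed, vision moderate
under_canopy = fuse([(3.0, 30.0), (5.0, 4.0)])  # RTK degraded, vision unchanged
print(f"open sky: {open_sky[0]:.2f} cm, under canopy: {under_canopy[0]:.2f} cm")
```

With a clear sky the fused offset sits near the RTK reading. Once the RTK uncertainty grows under canopy, the same code shifts almost entirely to the vision reading without any explicit mode switch.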

Choosing the Right Localization Stack for Your Operation

| Crop / Environment | Recommended Primary | Secondary / Fusion |
|---|---|---|
| Row crops (corn, soy, wheat, cotton) >20-inch rows | RTK GPS | Vision for headlands and obstacles |
| Specialty vegetables (lettuce, broccoli, celery) | Vision (weed detection) | RTK for row navigation |
| Vineyards, inter-row | RTK GPS | Vision for end-of-row |
| Orchards, inter-row | RTK GPS | Vision for canopy obstacles |
| Orchards, under-canopy / harvest | Vision or LiDAR + vision | RTK for geo-reference only |
| Greenhouse / indoor vertical | Vision + LiDAR | No GPS needed |
| Strawberries, raised bed | Vision + RTK fusion | IMU for bed-edge tracking |

Decision questions to ask:

- Does my crop require plant-level discrimination (weed vs. crop, ripe vs. unripe)? If yes, vision is non-optional regardless of what else you use.

- Is RTK coverage reliable across my entire operating area, including field edges and any topographic features? Survey this — don't assume.

- How consistent is my crop geometry season to season? High consistency favors RTK-based systems; high variation favors vision-adaptive systems.

- Do I need the system to generate field-record data (where exactly did I spray, what did I see)? If yes, some form of GPS geo-referencing is required even for primarily vision-based systems.

- What are my typical operating conditions? Significant fog, dust, or night operation requirements mean the system's environmental specs need to be verified against your actual conditions, not the vendor's ideal-case specs.
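For the RTK coverage question above, one practical survey method is to drive the field with a receiver logging NMEA GGA sentences and check how often it held an RTK fixed solution. The sketch below reads the standard GGA fix-quality field (4 means RTK fixed, 5 means RTK float). The sample sentences are synthetic, their checksums are placeholders, and the code does not validate checksums.

```python
def gga_fix_quality(sentence):
    """Return the fix-quality code from a GGA sentence, or None if not GGA."""
    fields = sentence.split(",")
    if not fields[0].endswith("GGA") or len(fields) < 7 or not fields[6]:
        return None
    return int(fields[6])

def rtk_fixed_share(sentences):
    """Fraction of GGA sentences reporting an RTK fixed solution (quality 4)."""
    qualities = [q for q in map(gga_fix_quality, sentences) if q is not None]
    if not qualities:
        return 0.0
    return sum(q == 4 for q in qualities) / len(qualities)

# Synthetic log lines; only the fix-quality field (7th) differs meaningfully.
log = [
    "$GNGGA,170000.00,3645.1200,N,12145.3400,W,4,18,0.7,12.0,M,-30.0,M,1.0,0000*00",
    "$GNGGA,170001.00,3645.1201,N,12145.3401,W,4,18,0.7,12.0,M,-30.0,M,1.0,0000*00",
    "$GNGGA,170002.00,3645.1202,N,12145.3402,W,5,12,1.4,12.0,M,-30.0,M,2.0,0000*00",
    "$GNGGA,170003.00,3645.1203,N,12145.3403,W,1,7,2.9,12.0,M,-30.0,M,,*00",
]
print(f"RTK fixed for {rtk_fixed_share(log):.0%} of the log")  # 50%
```

Grouping the same statistic by location shows where along field edges or near terrain features the fix drops out, which is the evidence to bring to a vendor conversation.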

Common Vendor Claims to Verify

"Works in all conditions": Ask specifically about performance in fog below 50 m visibility, dust concentration above a stated threshold (in micrograms/m³), and operating temperatures below 35°F. Get the operating envelope in the contract.

"Centimeter-accurate positioning": Ask whether this is RTK accuracy with a clear sky and base station within range, or a general claim. Ask what the accuracy is under your specific conditions.

"No base station required": This typically means the system uses a cellular RTK correction network. Confirm coverage at your farm location — many correction networks have gaps in rural areas.

"Self-updating AI models": Ask how often the weed detection model is retrained, whether your crop variety is in the training set, and what happens to accuracy when a new weed species enters your region.

Next in this series: field constraints that kill robot deployments — terrain, weather, crop spacing, and the site conditions no vendor demo surfaces.