Delivery Robot Vendor Selection: Insurance, Telemetry, and Fallback Procedures

The questions most RFPs miss — and why they matter more than demo performance.

Most delivery robot RFPs look like spec sheets: navigation accuracy, payload capacity, battery range, operating temperature range, ingress protection rating. These specifications matter, but they are also the parameters every vendor optimizes for, because vendors know that is what buyers ask about.

The questions that actually differentiate vendors in live deployment are different. They're about what happens when something goes wrong: who carries the liability, what data you can access when an incident occurs, and how operations continue when a robot fails. These questions are harder to ask because vendors are not practiced at answering them honestly, and because buyers often feel like asking them implies distrust.

Ask them anyway. A vendor that gives clear, confident answers to the hard questions is a vendor that has thought through the operational realities of deployment. A vendor that hedges, deflects, or promises to get back to you is telling you something important about their operational maturity.

Insurance: The Questions Your Legal Team Won't Think to Ask

Who carries the liability?

For every incident a robot causes — a pedestrian trip, a blocked curb ramp, property damage — there is a liable party. In some vendor structures, the vendor carries all liability as the robot's owner and operator. In others, liability transfers to the client operator at contract signing. Most arrangements are somewhere in between, with shared liability that gets sorted out in the contract language.

Before you sign anything, your legal counsel needs to understand the liability structure for:

- Third-party bodily injury (someone is hurt by the robot)

- Third-party property damage (robot collides with a parked car, a storefront display)

- ADA violations (robot blocks accessibility infrastructure)

- Data breaches (robot telemetry or video footage is compromised)

- Workers' compensation (vendor's technicians working on your property)

Ask for the vendor's Certificate of Insurance (COI) and have your risk team review the coverage limits and exclusions. Request that your organization be named as an additional insured on the vendor's policy. Ask specifically whether ADA-related incidents are covered and what the coverage limit is.

What's the deductible structure, and who pays it?

Even if the vendor carries primary liability, there may be deductibles, co-pays, or claim thresholds that come back to the operator. Understand the cost structure before an incident, not after.

Does your own umbrella policy cover robot-related incidents?

Many commercial general liability policies have explicit exclusions for autonomous vehicles or unmanned systems. If you're the named operator of a delivery robot program — even on a vendor platform — you may have exposure that your existing policy doesn't cover. Get your broker's written opinion on this question before deployment.

What's the vendor's incident history?

Ask the vendor for their aggregate incident data: number of reported incidents per million deliveries, claim history in the past 24 months, and ADA-related complaints. A vendor that won't share this data either lacks a clean record or is not tracking it, and both are warning signs. A vendor that shares it is giving you the actuarial basis for your own risk assessment.
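Raw incident counts are only comparable once they are normalized by delivery volume. A minimal sketch of that arithmetic follows; the function name and both vendors' figures are hypothetical, chosen only to show that the vendor with more total incidents can still have the lower rate.

```python
def incidents_per_million(incidents: int, deliveries: int) -> float:
    """Normalize a raw incident count to a rate per one million deliveries."""
    if deliveries <= 0:
        raise ValueError("deliveries must be positive")
    return incidents / deliveries * 1_000_000


# Hypothetical figures for two vendors (illustrative, not real data).
vendor_a = incidents_per_million(incidents=12, deliveries=400_000)
vendor_b = incidents_per_million(incidents=30, deliveries=2_500_000)

print(f"Vendor A: {vendor_a:.1f} incidents per million deliveries")
print(f"Vendor B: {vendor_b:.1f} incidents per million deliveries")
```

With these made-up numbers, Vendor B reports more incidents in absolute terms but a lower rate per delivery, which is why the per-million figure is the one to ask for.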

Telemetry: What Data You Can Access and When

Do you own your operational data?

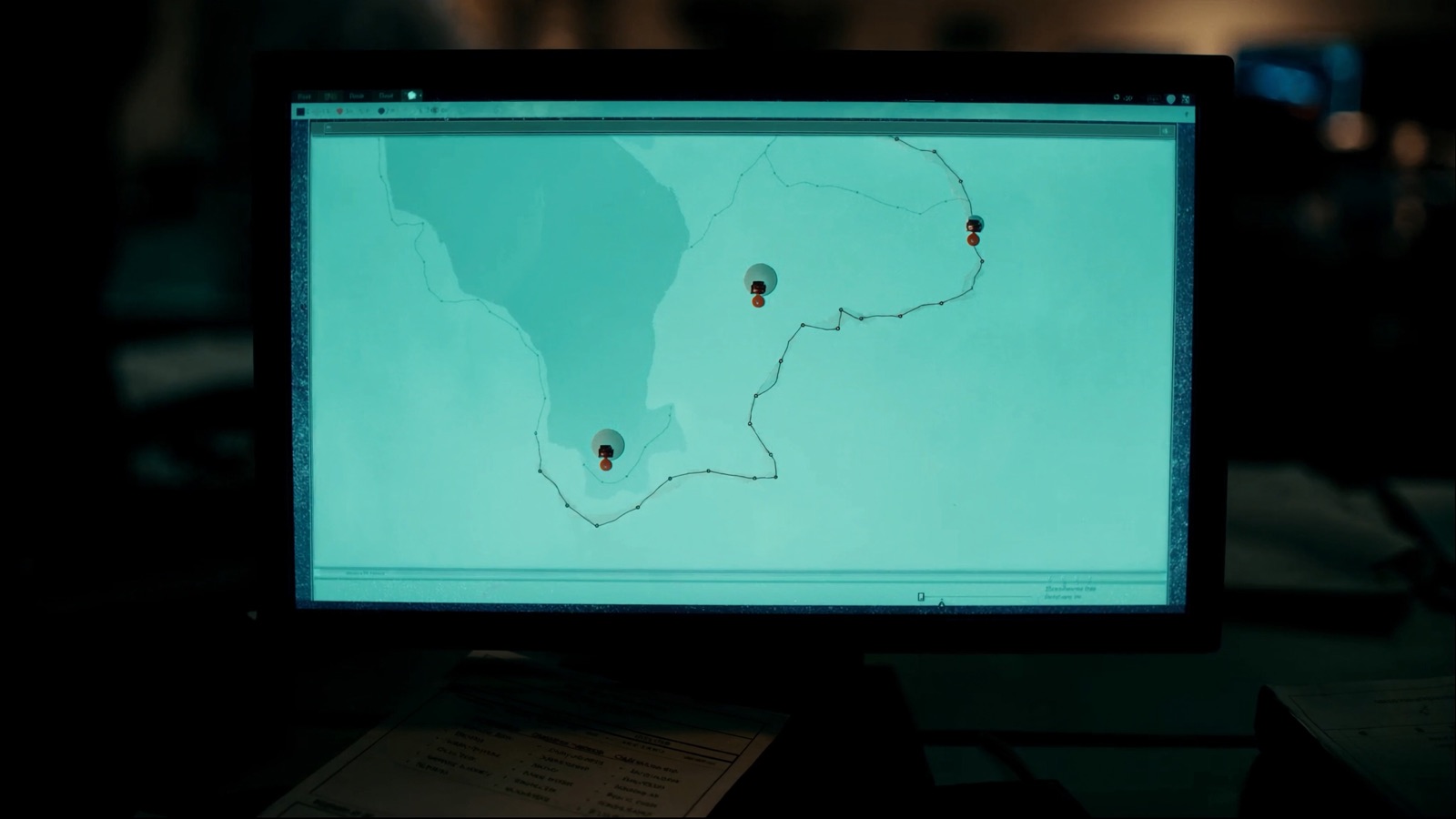

A delivery robot generates continuous telemetry: position, speed, obstacle detection events, human intervention flags, battery state, environmental sensor readings. That data is valuable — it's your record of what the robot did, when, and why.

Some vendors treat this data as proprietary to the platform and provide only summary reports. Others give clients full access to raw telemetry streams via an API. Others offer a middle ground — a client-facing dashboard with incident playback but no raw data export.

Understand your data rights before you commit. If an incident occurs and you need to reconstruct what happened for a liability defense, you need access to the robot's telemetry from the time of the incident. "We'll run an internal review and send you a report" is not the same as "here is raw telemetry access for the relevant time window."

Is video footage stored, and who controls it?

Most sidewalk delivery robots record video for navigation and safety purposes. Whether that footage is stored, for how long, in what format, and who can access it varies widely by vendor.

For outdoor deployments: if the robot records footage of public sidewalks and pedestrians, there may be privacy law implications in jurisdictions that regulate video surveillance or biometric data collection (California's CCPA, Illinois's BIPA). Understand whether the vendor's data handling practices create compliance exposure for you.

For hospital deployments: any recording in a clinical environment may implicate HIPAA. Verify that the robot's video and data handling is specifically reviewed for HIPAA compliance — not just "we're SOC 2 compliant" (which covers data security, not healthcare privacy).

What does the incident playback look like?

Before signing a contract, ask for a live demonstration of incident review on a past incident from an existing deployment. Not a staged demo — a real incident, with real data, walked through in real time. Ask to see how the vendor identifies what triggered an intervention, what the robot's sensor readings showed in the 30 seconds before the event, and how the resolution was documented.

If the vendor can't show you this, they either don't have the tooling or don't want you to see how it works. Both are concerning.
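If the vendor does provide raw telemetry access, the 30-second look-back described above amounts to a simple filter over timestamped records. The sketch below assumes a hypothetical record shape (`TelemetryRecord` and its fields are illustrative); real vendor schemas will differ.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class TelemetryRecord:
    # Hypothetical record shape; a real vendor schema will have other fields.
    timestamp: datetime
    speed_mps: float
    obstacle_detected: bool


def window_before(records, event_time, seconds=30):
    """Return records from the `seconds` up to and including event_time, oldest first."""
    start = event_time - timedelta(seconds=seconds)
    selected = [r for r in records if start <= r.timestamp <= event_time]
    return sorted(selected, key=lambda r: r.timestamp)


# Illustrative data: samples at 45, 30, 20, 5, and 0 seconds before the event.
event = datetime(2024, 1, 15, 14, 30, 0)
records = [
    TelemetryRecord(event - timedelta(seconds=s), 1.2, s <= 5)
    for s in (45, 30, 20, 5, 0)
]

pre_event = window_before(records, event)
for r in pre_event:
    print(r.timestamp.time(), r.speed_mps, r.obstacle_detected)
```

Being able to run a query like this yourself, rather than waiting for a vendor-prepared report, is the practical difference between raw access and summary access.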

Fallback Procedures: What Happens When the Robot Stops

Three failure modes to ask about explicitly

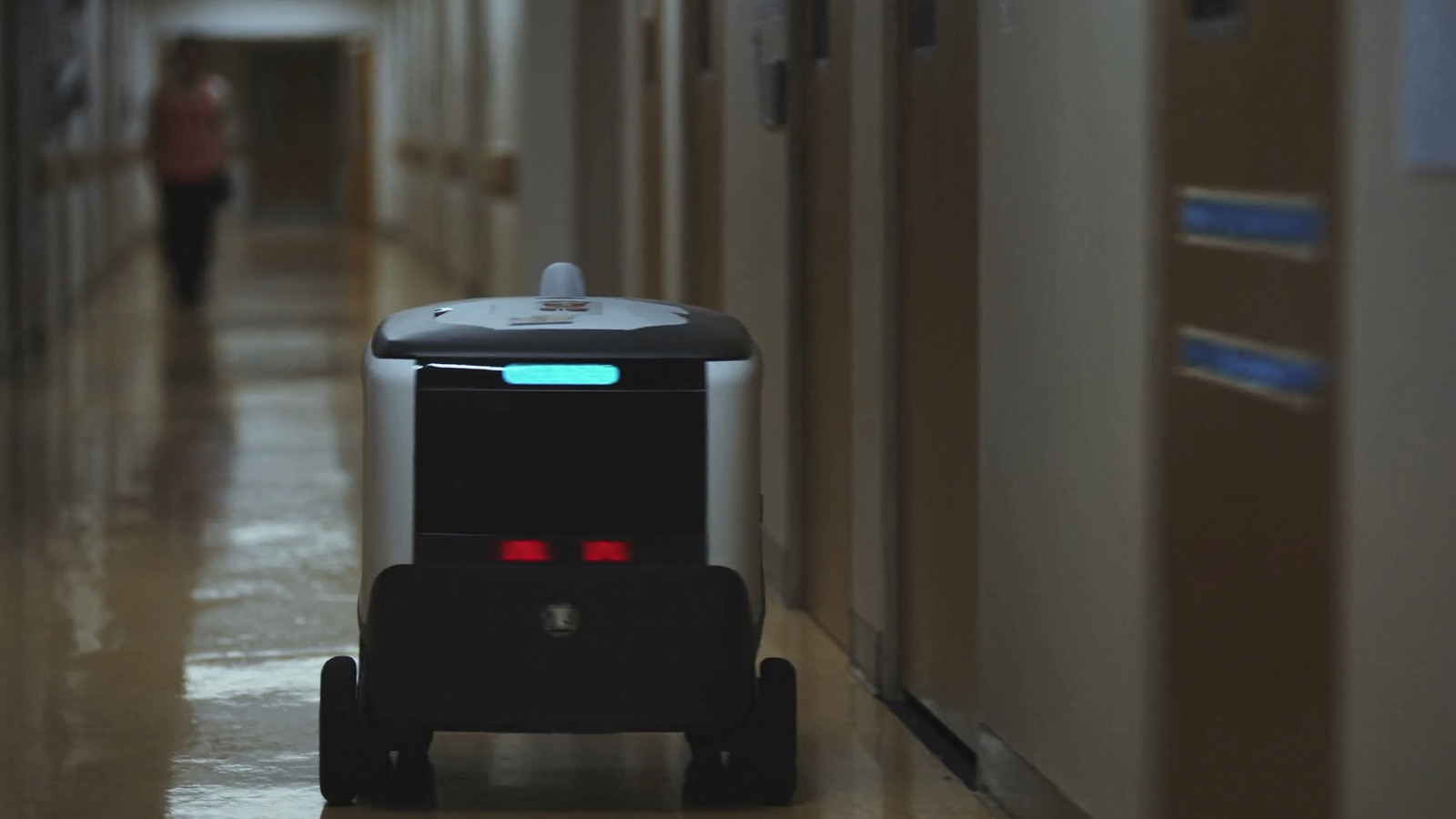

Mechanical failure: the robot stops moving due to a hardware issue (motor fault, sensor failure, battery problem). Who is responsible for retrieving the robot from the street or hallway? What's the vendor's SLA for field response? In a hospital corridor, a stationary robot blocking a medication path is a clinical issue, not just an equipment issue. On a public sidewalk, a stationary robot blocking a curb ramp is an ADA compliance issue.

Software failure: the robot stops or behaves unexpectedly due to a software bug, mapping error, or connectivity loss. Does it fail safe (stop and wait for intervention) or fail dangerous (continue moving without sensor input)? All vendors will say their robots fail safe; ask to see the documentation of how fail-safe behavior is implemented and tested.

Connectivity loss: the robot loses its cellular or Wi-Fi link mid-journey. What is its behavior? Can it complete the delivery autonomously without connectivity? Or does it require a connection to operate? If connectivity is required for operation, what is the backup plan for a delivery in progress when the connection drops?
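When reviewing a vendor's fail-safe documentation, it helps to know what the logic tends to look like in principle. The sketch below is one common pattern, a heartbeat watchdog, and not any particular vendor's implementation. The timeout values and the `link_required` flag are assumptions made for illustration.

```python
from enum import Enum


class Action(Enum):
    CONTINUE = "continue"
    STOP_AND_WAIT = "stop_and_wait"


def decide_action(now_s, last_sensor_s, last_link_s,
                  sensor_timeout_s=0.5, link_timeout_s=10.0,
                  link_required=True):
    """Choose an action from how stale the sensor and link heartbeats are.

    All times are in seconds. Timeouts are hypothetical defaults.
    """
    # Stale sensor data means the robot cannot perceive its surroundings,
    # so the fail-safe choice is to stop regardless of link state.
    if now_s - last_sensor_s > sensor_timeout_s:
        return Action.STOP_AND_WAIT
    # A stale link only forces a stop if the platform needs connectivity.
    if link_required and now_s - last_link_s > link_timeout_s:
        return Action.STOP_AND_WAIT
    return Action.CONTINUE


print(decide_action(100.0, 99.9, 99.0))   # fresh sensor and link
print(decide_action(100.0, 98.0, 99.0))   # sensor data is 2 s old
print(decide_action(100.0, 99.9, 80.0))   # link has been down 20 s
print(decide_action(100.0, 99.9, 80.0, link_required=False))
```

The questions to put to the vendor map directly onto the parameters: what the timeouts are, whether connectivity is required, and how each branch is tested.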

Recovery logistics

A robot failure during peak delivery hours creates an operational gap. How is that gap filled? What's the escalation path from robot failure to human backup delivery? Who is responsible for dispatching the backup — the operator or the vendor?

For hospital deployments specifically: if the TUG robot goes offline, what's the manual fallback for urgent medication delivery? The answer cannot be "we'll wait for the vendor to restore service." There must be a documented protocol for reverting to manual processes that doesn't depend on how quickly the vendor's field team responds.

Software update procedures

Vendors push software updates to their robots — sometimes multiple times per week. Ask specifically:

- Do updates happen automatically or with operator notification?

- What is the update rollback procedure if an update causes a regression?

- Have any updates in the past 12 months caused operational disruptions at client sites?

The last question is the most useful. Any vendor running a live fleet at scale will have had at least one update that caused unexpected behavior in the field. How they handled it — and whether they proactively communicated it to clients — tells you more about the vendor relationship than any spec sheet.

Reference Site Validation

The most useful step in vendor selection is not evaluating the vendor — it's evaluating a site that is already running the vendor's system at scale.

Ask for three reference contacts at sites that:

- Are similar to your deployment in size and environment

- Have been running the vendor's robots for at least 12 continuous months

- Have experienced at least one significant incident (not pilots that have been incident-free)

The 12-month requirement matters because the first 90 days of a deployment are when the vendor is most attentive. Problems that emerge in months 4 through 12 — when the vendor's implementation team has moved to the next client — reveal the operational maturity of the platform and the support model.

The incident requirement matters because you need to know how the vendor behaves under pressure. A vendor that handled a crisis well is a vendor worth trusting. A vendor whose reference sites only have incident-free stories is giving you sales references, not operational references.

When you speak with references, ask specifically:

- "What's the worst thing that's happened, and how did the vendor handle it?"

- "What did you wish you had asked before signing?"

- "If you were running the evaluation again, what would you do differently?"

The answers to those three questions will tell you more than a vendor presentation.

Vendor Comparison Framework

Use this framework to score vendors across the four areas that matter in live deployment:

| Criterion | Weight | What good looks like |
|---|---|---|
| Insurance clarity | 25% | Clear liability allocation, COI provided, ADA coverage confirmed, your org can be named additional insured |
| Telemetry access | 25% | Client API access to raw telemetry, video retention policy documented, incident playback demonstrated on real data |
| Fallback procedures | 25% | Documented fail-safe behavior, published SLA for field response, manual backup protocol that doesn't require vendor |
| Reference site quality | 25% | 3+ references at similar sites with 12+ months operational, at least one with incident experience |

Technical specs (battery life, payload, navigation accuracy) are table stakes — every vendor in the market has acceptable specs for standard use cases. The differentiation is in the operational and contractual details.

A vendor that scores well on all four criteria is a vendor you can trust with a live deployment. A vendor that scores well on technical specs but poorly on one or more operational criteria is a vendor that will perform beautifully in the demo and cause you problems when something goes wrong in the field.
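The weighted framework above can be sketched as a small scoring function. The four criteria and their 25% weights come from the table; the 0 to 5 scoring scale, the function names, and the example scores are assumptions for illustration.

```python
# Weights taken from the comparison table; each criterion counts for 25%.
WEIGHTS = {
    "insurance_clarity": 0.25,
    "telemetry_access": 0.25,
    "fallback_procedures": 0.25,
    "reference_site_quality": 0.25,
}


def weighted_score(scores):
    """Combine per-criterion scores (assumed 0-5 scale) into one weighted total."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


def weakest_criterion(scores):
    """Return the lowest-scoring criterion so a weak area is not hidden by the total."""
    return min(WEIGHTS, key=lambda name: scores[name])


# Hypothetical scores for one vendor.
vendor_scores = {
    "insurance_clarity": 4,
    "telemetry_access": 2,
    "fallback_procedures": 5,
    "reference_site_quality": 3,
}

total = weighted_score(vendor_scores)
weakest = weakest_criterion(vendor_scores)
print(f"Weighted score: {total:.2f} / 5, weakest area: {weakest}")
```

Reporting the weakest criterion alongside the total is a deliberate choice here: a respectable average can mask the single operational gap that causes problems in the field.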

After the Selection Decision

Once you've selected a vendor, negotiate the key terms into the contract before you sign, not through amendments afterward. The key commercial terms to get in writing:

- Liability allocation (which party carries what, with specific coverage amounts)

- Data ownership and telemetry access (what format, what access level, for how long)

- SLA for field response (hours to on-site, hours to resolution, penalty for breach)

- Update notification (minimum notice period before software updates)

- Pilot kill criteria (as discussed in the pilot playbook article)

- Exit terms (what happens to the hardware and data if you terminate the agreement)
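An SLA written as "hours to on-site, hours to resolution" is easy to check against incident timestamps once it is in the contract. A minimal sketch follows, with hypothetical thresholds (2 hours to on-site, 8 hours to resolution) standing in for whatever you negotiate.

```python
from datetime import datetime


def sla_breaches(reported, on_site, resolved,
                 max_hours_on_site=2.0, max_hours_resolution=8.0):
    """Return the SLA terms breached for one field incident.

    Thresholds are hypothetical placeholders for negotiated contract values.
    """
    hours_on_site = (on_site - reported).total_seconds() / 3600
    hours_resolution = (resolved - reported).total_seconds() / 3600
    breaches = []
    if hours_on_site > max_hours_on_site:
        breaches.append("on_site")
    if hours_resolution > max_hours_resolution:
        breaches.append("resolution")
    return breaches


# Illustrative incident: technician arrives after 3.5 h, fix lands after 6 h.
reported = datetime(2024, 3, 4, 9, 0)
breaches = sla_breaches(
    reported,
    on_site=datetime(2024, 3, 4, 12, 30),
    resolved=datetime(2024, 3, 4, 15, 0),
)
print(breaches)
```

Terms that can be evaluated this mechanically are also the ones a breach penalty can be attached to without argument.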

The contract negotiation is where vendors reveal how they think about the relationship. A vendor that pushes back on reasonable SLA terms or data access provisions may be signaling that their support model can't meet reasonable expectations.

The exit terms are particularly worth reading carefully. In a hardware-purchase model, you own the robot, but you may not own the maps, the fleet management software, or the operational data. In a robots-as-a-service (RaaS) model, everything typically reverts to the vendor when the contract ends. Know what you're left with if the relationship doesn't work out.

Running a delivery robot program at scale is a multi-year commitment. The vendor you choose is an operational partner for that period. Evaluate them accordingly.