Last-Mile Delivery Pilots: 90 Days to a Decision

A pilot that can't produce a clear yes or no in 90 days isn't a pilot. It's a vendor relationship.

The most common outcome of a delivery robot pilot is not a clear yes or a clear no. It's an extension request.

At day 90, the vendor presents a summary showing the robot performed well in several areas, ran into some software issues that have since been fixed, and will definitely hit the utilization target in the next 60 days. The operations manager is uncertain, because the data is genuinely mixed. The program gets extended. Sixty days later, it gets extended again. Two more cycles and the 90-day pilot is close to a year old, no decision has been made, and the robot is now so embedded in the operation that removing it feels disruptive even though the business case was never validated.

That is not a pilot. That is a vendor relationship without an exit.

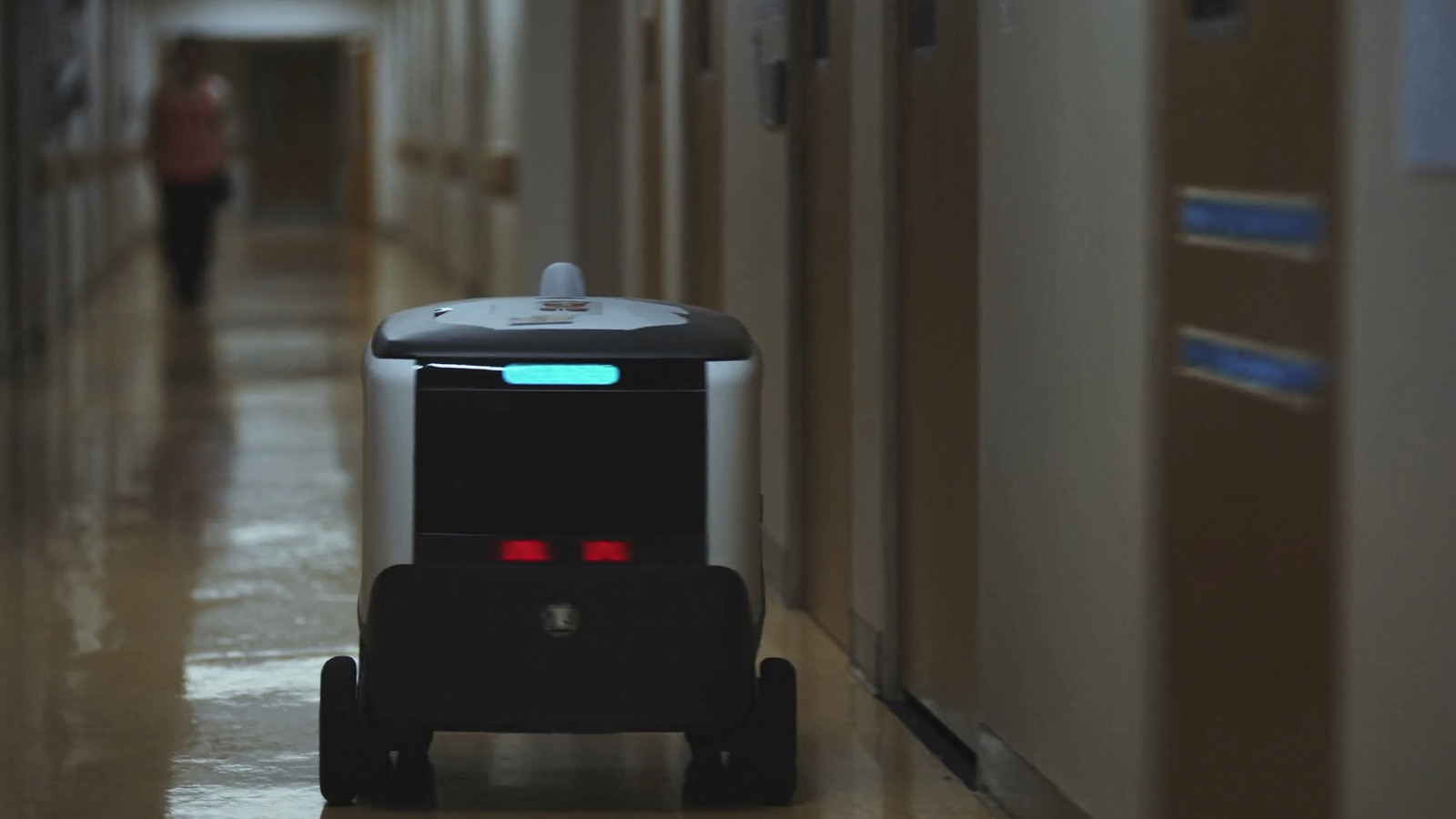

The discipline of running a pilot that forces a decision is the same whether you're deploying on city sidewalks or in a hospital corridor. The specifics differ, but the structure is identical.

The Non-Negotiables Before Day 1

Define success in writing

This is the most important step in the pilot process, and it happens before the robot arrives.

Write down exactly what the robot must do — in quantitative terms — for the pilot to result in a "proceed" recommendation. Three KPIs is the right number. More than three and you're measuring everything; fewer than three and you're not measuring enough.

Your three KPIs should cover: cost efficiency, reliability, and operational fit. Examples:

| Use case | Cost KPI | Reliability KPI | Operational KPI |
|---|---|---|---|
| Sidewalk food delivery | Per-delivery cost ≤ [X]% of current courier cost | Successful delivery rate ≥ 92% (no assist required) | Avg. delivery time ≤ 35 min |
| Hospital supply runs | Supply transport FTE hours reduced by ≥ 15% | Trip completion rate ≥ 95% | Staff complaint rate ≤ 2 per week |
| Hotel amenity delivery | Amenity delivery cost ≤ $[Y] per delivery | Delivery success rate ≥ 90% | Guest satisfaction score maintained |

The KPIs go into a written document signed by whoever has budget authority for the program. Finance director, VP of Operations, whoever owns the business case. If the KPIs can't get signed, you don't yet have internal alignment, and a 90-day pilot will not produce a decision.

Measure the baseline before the robot arrives

Run two full weeks of normal operations before the robot starts. Measure your current KPIs using the same methodology you'll use during the pilot. That baseline is the only honest comparison point.

If you skip the baseline, you will have 90 days of robot performance data and nothing to compare it to. At that point, the vendor's framing of the results is the only framing available — and vendors are skilled at finding favorable comparisons.

For outdoor deployments, pull your courier cost data by zone and time window. Don't use a blended average; use the specific delivery segment the robot will replace.

For hospital deployments, pull supply transport labor hours broken down by shift and task category. For hotel deployments, log amenity delivery requests, response times, and labor cost per delivery for the baseline period.
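To see why a blended average misleads, here is a minimal Python sketch. The record layout, zone names, and dollar figures are all hypothetical, not data from a real deployment; the sketch only puts the per-segment calculation next to the blended one.

```python
from collections import defaultdict

# Hypothetical baseline records: (zone, time_window, deliveries, courier_cost_usd)
baseline_records = [
    ("zone_a", "lunch", 120, 780.0),
    ("zone_a", "dinner", 150, 1125.0),
    ("zone_b", "lunch", 80, 720.0),
    ("zone_b", "dinner", 50, 525.0),
]


def cost_per_delivery_by_segment(records):
    """Courier cost per delivery for each (zone, time_window) segment."""
    totals = defaultdict(lambda: [0, 0.0])  # segment -> [deliveries, cost]
    for zone, window, deliveries, cost in records:
        totals[(zone, window)][0] += deliveries
        totals[(zone, window)][1] += cost
    return {seg: cost / n for seg, (n, cost) in totals.items() if n > 0}


def blended_cost_per_delivery(records):
    """Single average across every segment (the number to avoid)."""
    total_deliveries = sum(r[2] for r in records)
    total_cost = sum(r[3] for r in records)
    return total_cost / total_deliveries


segment_costs = cost_per_delivery_by_segment(baseline_records)
blended = blended_cost_per_delivery(baseline_records)

print(f"blended: ${blended:.2f}")
for segment, cost in sorted(segment_costs.items()):
    print(segment, f"${cost:.2f}")
```

In this made-up data the blended figure is $7.88 per delivery, while the zone_b dinner segment costs $10.50. A robot that replaces only that segment would be judged against the wrong number if the blend were used as the baseline.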

Define kill criteria

Write down what outcome at day 90 triggers a "kill" decision — not an extension request, not a committee review. Something like: "If successful delivery rate is below 88% at week 12, the program does not proceed to Phase 2 regardless of vendor explanation."

Kill criteria take the vendor out of the decision loop. They were negotiated and signed before the pilot started, so the outcome at day 90 is measurable against a fixed target rather than subject to interpretation.

Vendors will push back on this. A vendor that refuses to accept written kill criteria in a pilot contract is telling you something important about how they plan to manage the relationship.
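Written kill criteria are simple enough to express as a mechanical check. The following Python sketch assumes each criterion is a floor on a single measured rate; the class name, metric name, and measured value are hypothetical, and the 88% floor mirrors the example wording above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KillCriterion:
    metric: str   # name of the measured KPI
    floor: float  # program ends if the measured value falls below this


def triggered_kill_criteria(criteria, measured):
    """Return the names of all criteria whose floor was missed."""
    triggered = []
    for criterion in criteria:
        # A missing measurement raises KeyError instead of passing silently.
        value = measured[criterion.metric]
        if value < criterion.floor:
            triggered.append(criterion.metric)
    return triggered


# Mirrors the example above: below 88% at week 12 means no Phase 2.
signed_criteria = [KillCriterion("successful_delivery_rate", 0.88)]
week_12_measured = {"successful_delivery_rate": 0.86}  # hypothetical result

print(triggered_kill_criteria(signed_criteria, week_12_measured))
```

The check takes no argument for vendor explanations, which is the point: the only inputs are the signed thresholds and the measured values.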

Week-by-Week Pilot Structure

Weeks 1–2: Controlled launch

Run a deliberately limited scope. For sidewalk delivery: one merchant partner, one delivery zone, peak hours only (lunch + dinner). For hospital: one department, one delivery task category. For hotel: amenity deliveries on one wing, one shift.

The purpose of weeks 1–2 is not to maximize deliveries — it's to find the problems. Constrained scope means problems are easier to isolate. A problem in week 2 that affects one delivery zone is solvable. A problem in week 2 that affects 10 zones and 5 merchant partners is a crisis.

Track everything: every trip, every intervention (human had to take over), every incident, every robot stop. The data from weeks 1–2 is the most important data in the pilot — it shows you the failure modes before you've scaled.
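One possible shape for that trip log, as a Python sketch. The field names are assumptions chosen to match the items listed above (trips, interventions, incidents, stops), not a vendor schema, and the sample trips are invented.

```python
from dataclasses import dataclass


@dataclass
class TripRecord:
    trip_id: str
    completed: bool
    interventions: int = 0   # times a human had to take over
    robot_stops: int = 0     # unplanned stops during the trip
    incident: bool = False   # anything that needed an incident report


def assist_free_success_rate(trips):
    """Share of trips completed with zero human interventions."""
    if not trips:
        return 0.0
    clean = sum(1 for t in trips if t.completed and t.interventions == 0)
    return clean / len(trips)


def interventions_per_trip(trips):
    """Average number of human takeovers per trip."""
    if not trips:
        return 0.0
    return sum(t.interventions for t in trips) / len(trips)


# Hypothetical week-1 log
week_1_trips = [
    TripRecord("t1", completed=True),
    TripRecord("t2", completed=True, interventions=1),
    TripRecord("t3", completed=False, robot_stops=2, incident=True),
    TripRecord("t4", completed=True, robot_stops=1),
]

print(assist_free_success_rate(week_1_trips))
print(interventions_per_trip(week_1_trips))
```

Counting a trip as successful only when it finished without an intervention matches the "no assist required" wording of the sidewalk reliability KPI. A trip that completed with a human takeover is logged but does not count toward the rate.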

Weeks 3–6: Operational baseline

Expand to the full planned scope: all merchant partners, all delivery zones, full operating hours. This is when you're generating the data that will actually be compared against your KPIs.

Schedule a formal weekly review — 30 minutes, same people each week, the same data package each week. Compare current performance to the baseline and to the pilot targets. Log any variance and the explanation for it.

This is also when you assess staff response. For indoor deployments: are staff using the robot as designed, or routing around it? For outdoor: are merchant staff correctly staging items for pickup, or leaving them in incorrect locations? Early behavioral drift from staff almost always precedes pilot failure — catch it in week 3, not week 9.
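For the weekly data package, each KPI row can be produced the same way every week. This is a rough Python sketch; the function name, the `higher_is_better` flag, and the sample numbers are assumptions for illustration only.

```python
def weekly_variance(metric, current, baseline, target, higher_is_better=True):
    """Build one row of the weekly review package for a single KPI."""
    sign = 1 if higher_is_better else -1
    gap_to_target = sign * (current - target)
    return {
        "metric": metric,
        "current": current,
        "change_vs_baseline": current - baseline,
        "gap_to_target": gap_to_target,  # negative means the target was missed
        "needs_explanation": gap_to_target < 0,
    }


# Hypothetical week-4 values. Delivery time is a lower-is-better metric.
time_row = weekly_variance(
    "avg_delivery_minutes", current=38, baseline=41, target=35,
    higher_is_better=False,
)
rate_row = weekly_variance(
    "successful_delivery_rate", current=0.93, baseline=0.95, target=0.92,
)

for row in (time_row, rate_row):
    print(row)
```

Any row flagged `needs_explanation` gets a logged variance and a written reason, so the week-12 review reads from a record rather than from memory.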

Weeks 7–10: Stress test

Introduce the conditions the vendor didn't optimize for.

For sidewalk deployments: high-traffic weekend afternoons, special events, wet pavement conditions, construction detours. For hospital: shift change, high-census days, emergency situations. For hotel: holiday weekends, large group check-ins.

If the robot was only tested in its vendor-optimized conditions during weeks 3–6, you've learned how it performs in normal operations. You haven't learned how it handles the 20% of conditions that are abnormal. That 20% is where failures concentrate.
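A short Python sketch of why stress-test trips should be reported separately rather than folded into one overall rate. The condition labels and trip counts are invented for illustration.

```python
from collections import defaultdict


def success_rate_by_condition(trips):
    """trips: iterable of (condition_label, succeeded) pairs."""
    counts = defaultdict(lambda: [0, 0])  # label -> [successes, total]
    for condition, succeeded in trips:
        counts[condition][1] += 1
        if succeeded:
            counts[condition][0] += 1
    return {label: wins / total for label, (wins, total) in counts.items()}


# Hypothetical log: 20 normal trips (19 succeed), 10 wet-pavement trips (7 succeed).
stress_log = (
    [("normal", True)] * 19 + [("normal", False)]
    + [("wet_pavement", True)] * 7 + [("wet_pavement", False)] * 3
)

rates = success_rate_by_condition(stress_log)
overall = sum(1 for _, ok in stress_log if ok) / len(stress_log)

print(rates)
print(f"overall: {overall:.3f}")
```

In this example the overall rate is about 87%, which hides a 95% rate in normal conditions and a 70% rate on wet pavement. The per-condition split is what shows where failures concentrate.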

Weeks 11–12: Decision preparation

No new experiments. Run at steady state and finalize the data. Have your finance contact run the full TCO model using actual cost data from the pilot (not vendor projections): actual supervision hours, actual cellular costs, actual maintenance incidents, actual charging infrastructure usage.
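As a rough illustration of a TCO calculation built from actuals, the Python sketch below sums the cost lines named above and divides by deliveries. The `vendor_fees` line and every number are assumptions; a real model would include whatever your contract and finance team require.

```python
def actual_cost_per_delivery(
    deliveries,
    supervision_hours,
    supervision_hourly_rate,
    cellular_cost,
    maintenance_cost,
    charging_cost,
    vendor_fees,
):
    """Per-delivery cost from actual pilot figures, not vendor projections."""
    if deliveries <= 0:
        raise ValueError("deliveries must be positive")
    total = (
        supervision_hours * supervision_hourly_rate
        + cellular_cost
        + maintenance_cost
        + charging_cost
        + vendor_fees  # assumed line item: lease or subscription charges
    )
    return total / deliveries


# Hypothetical 12-week totals
pilot_cost = actual_cost_per_delivery(
    deliveries=2000,
    supervision_hours=300,
    supervision_hourly_rate=25.0,
    cellular_cost=450.0,
    maintenance_cost=1200.0,
    charging_cost=350.0,
    vendor_fees=6500.0,
)

print(f"${pilot_cost:.2f} per delivery")
```

With these invented inputs, supervision labor ($7,500) is the largest cost line, larger than the assumed vendor fees. That is the kind of result a projection built without actual supervision hours would miss.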

Compare final performance against your three KPIs and your kill criteria. The decision should be deterministic: if KPIs are met, proceed to Phase 2 planning. If kill criteria are triggered, end the program. If results are mixed — some KPIs met, some not — that's a genuine judgment call, but it should be made by the people who signed the KPI document, not by the vendor.
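The three outcomes described above can be sketched as a small Python function. The names and return strings are illustrative assumptions; the only logic taken from the text is the ordering: kill criteria first, then KPIs, and anything mixed goes back to the signatories.

```python
def pilot_decision(kpi_results, kill_triggered):
    """kpi_results: dict of KPI name -> True if the target was met.
    kill_triggered: True if any signed kill criterion was missed."""
    if not kpi_results:
        raise ValueError("no KPI results to evaluate")
    if kill_triggered:
        return "end program"
    if all(kpi_results.values()):
        return "proceed to Phase 2 planning"
    # Mixed results: the people who signed the KPI document decide.
    return "mixed: judgment call by KPI signatories"


# Hypothetical week-12 outcome
week_12 = {"cost": True, "reliability": True, "operational_fit": False}
print(pilot_decision(week_12, kill_triggered=False))
```

The function has no "extend" branch. Under this structure an extension is something the signatories may choose in the mixed case, not a default outcome.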

The Vendor Extension Conversation

You will have this conversation. Prepare for it.

The vendor will point to the areas where the pilot succeeded and argue that the areas where it fell short are fixable with more time. They will have a plausible explanation for every underperformance — software update in progress, unusual weather, merchant partner not yet fully onboarded.

Your response should be the signed kill criteria document.

If the program failed to meet a criterion that was agreed upon before the pilot started, the question is not "can the vendor fix this" — it's "was this criterion reasonable when we set it?" If it was reasonable then, the appropriate response to missing it is to end the program, not to extend it under the assumption that the next 60 days will be different.

A well-structured pilot that results in a clear "no" is valuable. It tells you that this vendor, this use case, and this geography are not a fit right now. That information cost you 90 days, and it saved you from a much larger commitment. A vendor that treats a clean "no" as a sales recovery opportunity rather than a legitimate business outcome is not a vendor you want for a long-term relationship.

Quick Reference: Pre-Pilot Checklist

30 days before robot arrives:

- Three KPIs written and signed by budget authority

- Kill criteria written and signed

- Baseline measurement period defined and started

- Vendor contract includes kill criteria and data reporting requirements

- Wi-Fi or cellular coverage audit completed for deployment zone

- Staff briefing scheduled (mandatory attendance)

Day 1:

- Baseline measurement complete (2 full weeks of data)

- Incident logging system in place (who logs, what format, where)

- Weekly review calendar blocked with correct attendees

- Vendor escalation contact confirmed (who to call, what SLA)

Week 6:

- Mid-pilot review against KPIs

- Staff feedback survey completed

- Any software or operational adjustments documented

Week 12:

- Final performance vs. KPI comparison complete

- Full TCO model updated with actual pilot costs

- Decision made: proceed, kill, or (with documented justification) limited extension

The next article covers the vendor selection process — specifically the questions about insurance, telemetry, and fallback procedures that most RFPs miss.